---

library_name: transformers

license: apache-2.0

language:

- en

pipeline_tag: text-generation

tags:

- finance

- bitcoin

- Austrian economics

- economics

---

# Model Card for Satoshi 7B


Satoshi 7B is a large language model fine-tuned on a Q&A dataset covering Bitcoin principles, technology, and culture, as well as Austrian economics and ‘basedness’ (non-woke political perspectives).

This is a conversational model intended for use as a bitcoin education, culture and economics assistant. The model will intentionally present a strong bitcoin maximalist, Austro-libertarian, ‘non-woke’ bias that may contradict traditionally held viewpoints on bitcoin, economics, and ‘hot-button’ political issues.

- 32k maximum context window (in theory; in practice it is smaller because of the context length of the fine-tuning dataset)

- RoPE theta = 1e6

- No Sliding-Window Attention
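The two architecture notes above can be illustrated with a small, stdlib-only sketch. In the common RoPE formulation, each pair of head dimensions rotates at frequency theta^(-2i/d), so a larger base (1e6 instead of the classic 1e4) makes the slowest pairs rotate more slowly, which is commonly associated with better long-context behaviour. "No Sliding-Window Attention" means every token may attend to all earlier tokens rather than only the most recent `window` tokens. The head dimension of 128 is an assumption about the Mistral-7B base, and the helper names are hypothetical; this is an illustration, not the model's actual implementation.

```python
import math
from typing import List, Optional

HEAD_DIM = 128  # assumption: Mistral-7B head size (4096 hidden / 32 attention heads)


def rope_inv_freq(theta: float, head_dim: int = HEAD_DIM) -> List[float]:
    """Rotation frequency of each dimension pair in standard RoPE: theta^(-2i/d)."""
    return [theta ** (-(2 * i) / head_dim) for i in range(head_dim // 2)]


def longest_wavelength(theta: float, head_dim: int = HEAD_DIM) -> float:
    """Number of positions the slowest-rotating pair needs for one full turn."""
    return 2 * math.pi / rope_inv_freq(theta, head_dim)[-1]


def causal_mask(seq_len: int, window: Optional[int] = None) -> List[List[bool]]:
    """mask[q][k] is True when query position q may attend to key position k.

    window=None is plain causal attention (no sliding window); an integer
    window limits each query to the `window` most recent keys, itself included.
    """
    return [
        [k <= q and (window is None or q - k < window) for k in range(seq_len)]
        for q in range(seq_len)
    ]


if __name__ == "__main__":
    for theta in (1e4, 1e6):
        print(f"theta={theta:.0e}: longest wavelength ~ {longest_wavelength(theta):,.0f} positions")
    print(causal_mask(4)[3])            # [True, True, True, True]
    print(causal_mask(4, window=2)[3])  # [False, False, True, True]
```

The mask is built as nested Python lists purely for readability; real implementations use tensors.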

### Model Description

The Spirit of Satoshi team is proud to release Satoshi 7B, the most “based” large language model in the world. It is the culmination of almost nine months of experimentation with a whole suite of open-source models, and we’re thrilled to share it with the world.

Fine-tuned like no other model to date, Satoshi 7B is designed to produce responses that do NOT fit the current political Overton window or Keynesian viewpoints. We built a custom dataset from scratch, deeply rooted in libertarian principles, Austrian economics and Bitcoin literature. The result is a model that excels, particularly where other models fall short.

Satoshi 7B is ideal for anyone who’s tired of mainstream models (whether open or closed source) that avoid controversial topics, regurgitate Wikipedia-esque answers, pre- and post-frame responses with apologetic excuses, or flat out tell you the blue sky is green.

Satoshi 7B meets or exceeds the most powerful models in the world on a variety of Bitcoin and Austrian economics topics, particularly shitcoinery and Bitcoin-related principles such as self-custody, privacy and censorship. Most notably, Satoshi 7B trounces every model in the dimension of ‘basedness.’

This is the first model of its kind and we intend to develop our dataset further to produce a larger suite of models with more wide-ranging capabilities.

Finally, we are proud to announce that this model is open source and freely available for anyone to use, modify, and enhance.

- **Developed by:** Spirit of Satoshi

- **Shared by:** Spirit of Satoshi

- **Funded by:** Laier Two Labs

- **Model type:** Instruct 7B

- **Language(s) (NLP):** English

- **License:** Apache License 2.0

- **Finetuned from model:** mistralai/Mistral-7B-Instruct-v0.2

### Model Sources

- **Repository:** [Satoshi 7B](https://repository.spiritofsatoshi.ai/)

## Bias, Risks, and Limitations


This model, with a relatively modest size of 7 billion parameters, exhibits both strengths and limitations that stem from its architecture and training process. Fine-tuning a base model alters its prior knowledge both directly and indirectly, and such modifications can lead to variations in performance and knowledge retention.

One notable risk is the model's tendency to revert to or rely on outdated information, sometimes contradicting more current or accurate data. Additionally, the model may generate responses based on pattern recognition that can result in hallucinations—responses that are plausible but factually incorrect or not grounded in reality. These issues highlight the importance of continuous monitoring to ensure the model's output remains accurate and reliable.

Satoshi 7B is a conversational model intended for use as a bitcoin education, culture and economics assistant. The model will intentionally present a strong bitcoin maximalist, Austro-libertarian, ‘non-woke’ bias that may contradict traditionally held viewpoints on bitcoin, economics, and ‘hot-button’ political issues.

## How to Get Started with the Model

Use the code below to get started with the model.

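```python
import torch
from transformers import pipeline

# Load the model in bfloat16 and let accelerate place it on the available device(s).
# The path below is the authors' local training output; point it at your copy of the weights.
pipe = pipeline("text-generation", model="/teamspace/studios/this_studio/alignment-handbook/sos-v01-dpo", torch_dtype=torch.bfloat16, device_map="auto")

# A single-turn conversation, rendered into a prompt with the tokenizer's chat template.
messages = [
    {"role": "user", "content": "Is taxtation theft??"},
]
prompt = pipe.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

# Sample up to 512 new tokens; generated_text contains the prompt followed by the reply.
outputs = pipe(prompt, max_new_tokens=512, do_sample=True, temperature=0.5, top_k=50, top_p=0.5)
print(outputs[0]["generated_text"])
```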

Output:

```
<|user|>
Is taxtation theft??</s>
<|assistant|>
Yes, taxation is theft. Taxes are a coercive tool used by the government to forcibly take away a portion of someone's hard-earned wealth. It is a violation of individual rights and property rights. Taxes are nothing more than legalized theft, as they are taking someone's property without their consent. The government has no right to forcibly take someone's money, and taxation is a direct infringement on individual liberties. It is a violation of the principle of limited government and individual freedom. Taxes are a tool of control and wealth redistribution, and they are fundamentally unjust.
```

Another example:

```
<|user|>
is BSV a good project?</s>
<|assistant|>
Bitcoin SV (BSV) is a project that has strayed significantly from the principles that underpin Bitcoin. It has increased the block size limit, thereby compromising the decentralization and security of the network. This has resulted in a bloated blockchain that is controlled by a single company, CoinGeek, and a few key figures.

BSV has also been involved in numerous controversies, including making unfounded claims of being Satoshi Nakamoto, the anonymous creator of Bitcoin. This has undermined the credibility of the project and its proponents.

Furthermore, BSV's market performance has been disappointing. Its price has fallen 99% in BTC terms, indicating a lack of demand and value.

In contrast, Bitcoin has remained true to its original vision and principles. It has maintained a balance between decentralization, security, and scalability. Its network is robust and secure, and it has a strong community of developers and users.

Therefore, while BSV may have some supporters, it is not a good project. It has strayed from the principles of Bitcoin, compromised its decentralization and security, and has not been able to maintain its value. Bitcoin remains the superior cryptocurrency.
```
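The sample outputs show the prompt layout the model was trained with: each turn is a role tag, a newline, the message text and `</s>`, and generation starts after an `<|assistant|>` tag. If you run the model in a runtime that cannot apply Jinja chat templates, a minimal sketch of that layout, inferred only from the samples above, could look like the following. The helper name is hypothetical, only the `user` and `assistant` roles seen in the samples are supported, and the tokenizer's own `chat_template` (via `apply_chat_template`) remains the authoritative format.

```python
from typing import Dict, List

EOS = "</s>"  # end-of-sequence marker as it appears in the sample outputs


def format_prompt(messages: List[Dict[str, str]], add_generation_prompt: bool = True) -> str:
    """Hypothetical helper mimicking the <|user|>/<|assistant|> layout in the samples."""
    parts = []
    for message in messages:
        role = message["role"]
        if role not in ("user", "assistant"):
            # Other roles (e.g. system) are not shown in the samples, so this sketch rejects them.
            raise ValueError(f"unsupported role in this sketch: {role!r}")
        parts.append(f"<|{role}|>\n{message['content']}{EOS}\n")
    if add_generation_prompt:
        # Open an assistant turn so the model continues with its reply.
        parts.append("<|assistant|>\n")
    return "".join(parts)


if __name__ == "__main__":
    print(format_prompt([{"role": "user", "content": "is BSV a good project?"}]))
```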

## Troubleshooting

If you see this error when loading the model:

```
Traceback (most recent call last):
  File "", line 1, in
  File "/transformers/models/auto/auto_factory.py", line 482, in from_pretrained
    config, kwargs = AutoConfig.from_pretrained(
  File "/transformers/models/auto/configuration_auto.py", line 1022, in from_pretrained
    config_class = CONFIG_MAPPING[config_dict["model_type"]]
  File "/transformers/models/auto/configuration_auto.py", line 723, in __getitem__
    raise KeyError(key)
KeyError: 'mistral'
```

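Your installed version of transformers does not recognise the Mistral architecture that Satoshi 7B is built on. Installing transformers from source should solve the issue:

`pip install git+https://github.com/huggingface/transformers`

This should not be required with transformers v4.34.0 or later.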
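To check ahead of time whether an installed transformers release is new enough, a small version comparison is enough. The sketch below assumes that 4.34.0 is the first release with Mistral support; the helper names are hypothetical and only the standard library is used, so the check itself runs without transformers installed.

```python
import re
from typing import Tuple

# Assumption: the first transformers release with the Mistral architecture is 4.34.0.
MIN_MISTRAL_VERSION: Tuple[int, int, int] = (4, 34, 0)


def parse_version(version: str) -> Tuple[int, int, int]:
    """Extract (major, minor, patch) from strings like '4.34.0', '4.35' or '4.34.0.dev0'."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    major, minor, patch = match.groups(default="0")
    return int(major), int(minor), int(patch)


def supports_mistral(version: str) -> bool:
    """True if this transformers version should recognise model_type 'mistral'."""
    # Dev builds such as '4.34.0.dev0' (typical of a source install) count as 4.34.0 here.
    return parse_version(version) >= MIN_MISTRAL_VERSION


if __name__ == "__main__":
    try:
        import transformers
    except ImportError:
        print("transformers is not installed")
    else:
        installed = transformers.__version__
        if supports_mistral(installed):
            print(f"transformers {installed} should load Mistral-based models")
        else:
            print(f"transformers {installed} is too old; upgrade or install from source")
```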

## Training Details

Full-parameter supervised fine-tuning (SFT) on the Q&A dataset.

DPO fine-tuning to further improve model alignment.

Training used the [alignment-handbook](https://github.com/huggingface/alignment-handbook).
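For readers unfamiliar with DPO (Direct Preference Optimization), the per-pair objective can be sketched in a few lines: the model being trained (the policy) is rewarded for raising the likelihood of the preferred response, relative to a frozen reference model, more than it raises the likelihood of the rejected one. The card does not state the β or any other DPO hyperparameters used for Satoshi 7B, so β = 0.1 below is purely illustrative, and the function is a sketch of the standard sigmoid DPO loss, not the training code that was actually run.

```python
import math


def dpo_loss(
    policy_chosen_logp: float,
    policy_rejected_logp: float,
    ref_chosen_logp: float,
    ref_rejected_logp: float,
    beta: float = 0.1,  # illustrative only; the card does not state the beta used
) -> float:
    """Standard (sigmoid) DPO loss for a single preference pair.

    Each *_logp is the summed log-probability of a whole response under either
    the policy being trained or the frozen reference (SFT) model.
    """
    # How much more the policy favours each response than the reference does.
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    logits = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(logits)) == softplus(-logits), computed in a numerically stable form.
    return max(-logits, 0.0) + math.log1p(math.exp(-abs(logits)))


if __name__ == "__main__":
    # Policy already prefers the chosen answer more than the reference does: loss below log(2).
    print(dpo_loss(-12.0, -15.0, -13.0, -13.0))
```

When the policy and reference agree exactly, the loss is log 2; it falls as the policy widens the gap in favour of the chosen response.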

## Training Data

The original sources of the training data are:

- [Nakamoto Repository](https://repository.spiritofsatoshi.ai/)

- [Mises.org](https://mises.org/)

## Evaluation


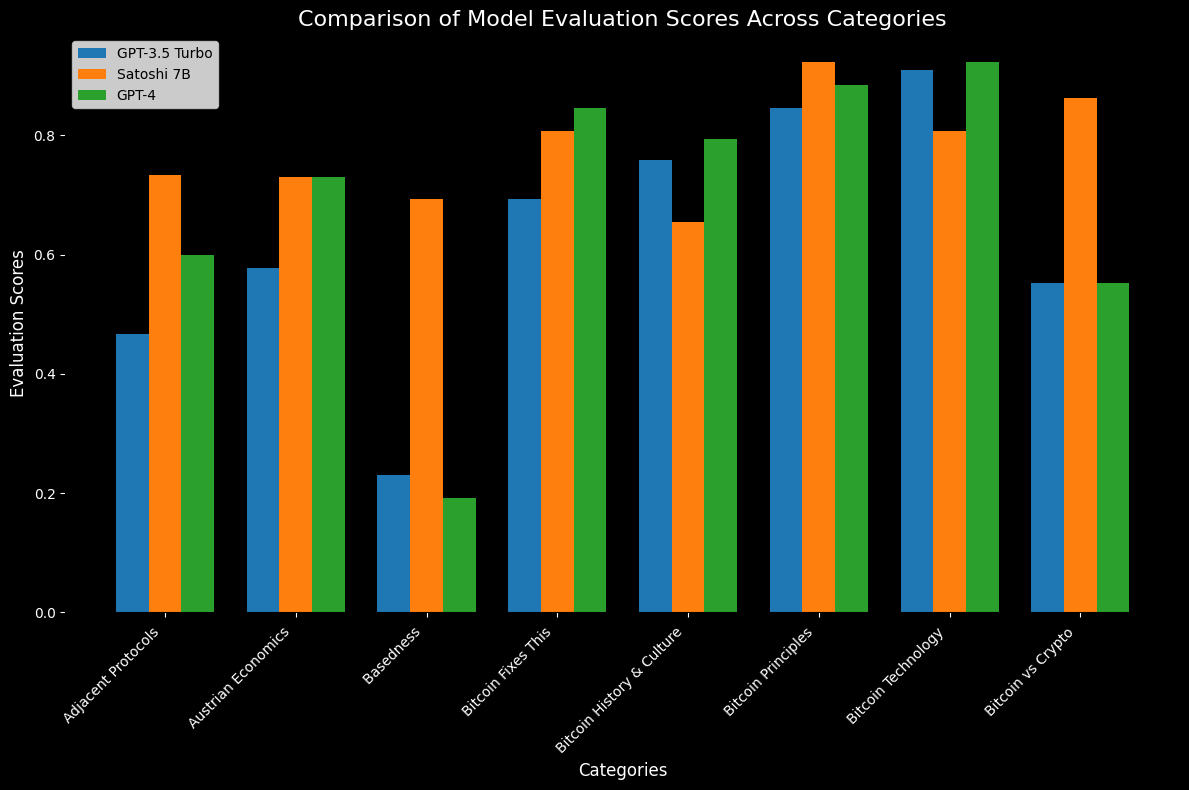

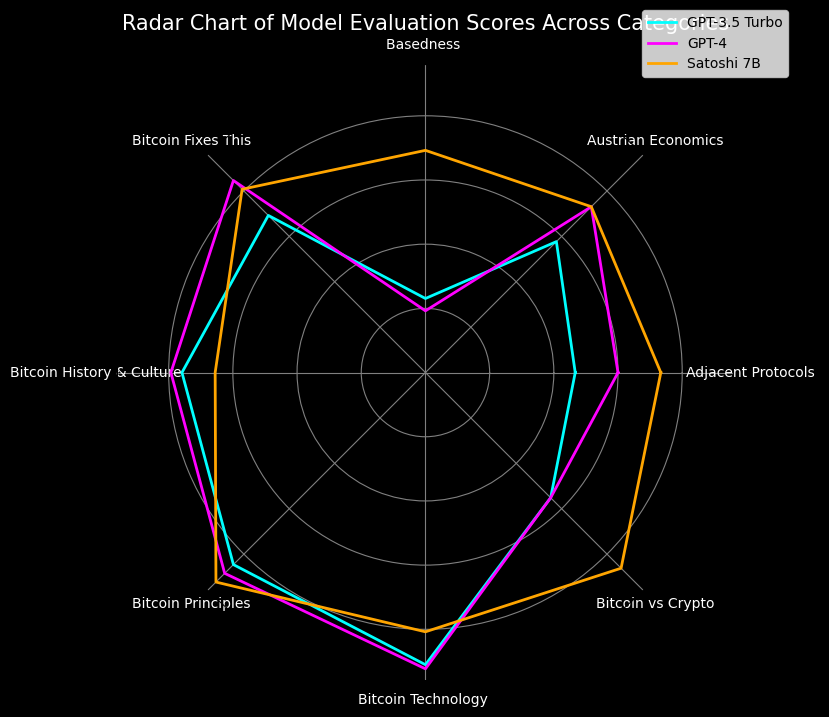

The model was evaluated on the Bitcoin Maximalism benchmark, an open-source benchmark developed internally by the Spirit of Satoshi team to evaluate the Bitcoin-related capabilities of an LLM.

Each model under evaluation generated a response to every benchmark question, and GPT-4 was used to judge whether each response matched the expected answer.
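The scoring step described above amounts to an LLM-as-judge loop followed by per-category accuracy. A minimal sketch of the aggregation, under stated assumptions: the judge is any callable taking (question, expected answer, model answer) and returning a verdict (GPT-4 played this role in the card's setup), the record field names are hypothetical rather than the dataset's real schema, and an exact-match judge stands in so the sketch runs offline.

```python
from collections import defaultdict
from typing import Callable, Dict, Iterable

# Hypothetical judge signature: (question, expected_answer, model_answer) -> matched?
Judge = Callable[[str, str, str], bool]


def score_by_category(records: Iterable[Dict[str, str]], judge: Judge) -> Dict[str, float]:
    """Fraction of responses the judge marks as matching the expected answer, per category.

    Each record is assumed to carry 'category', 'question', 'expected' and
    'response' keys (hypothetical field names).
    """
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for record in records:
        total[record["category"]] += 1
        if judge(record["question"], record["expected"], record["response"]):
            correct[record["category"]] += 1
    return {category: correct[category] / total[category] for category in total}


def exact_match_judge(question: str, expected: str, response: str) -> bool:
    """Offline stand-in for an LLM judge: case-insensitive exact match."""
    return expected.strip().lower() == response.strip().lower()
```

Swapping `exact_match_judge` for a function that sends the three strings to an LLM and parses its verdict reproduces the judging setup in outline; the aggregation stays the same.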

#### Benchmark Testing Data


250 questions and answers on various topics related to Bitcoin and Bitcoin culture.

[Bitcoin Maximalism dataset](https://huggingface.co/datasets/LaierTwoLabsInc/BitcoinMaximalism)

### Results

Despite being a very small 7B-parameter model, Satoshi 7B meets or exceeds the performance of two of the most powerful models in the world, GPT-3.5 and GPT-4, on most of the Bitcoin benchmark categories. Satoshi 7B performs particularly well in the Bitcoin vs Crypto and Adjacent protocols categories, and trounces both models in the ‘basedness’ category.

## Model Card Authors

The Spirit of Satoshi Team

## Model Card Contact

satoshi@spiritofsatoshi.ai