Upload folder using huggingface_hub (#2)

- 18161e7b08edc4085b9ef0f118f85f47a4f94cef452279d4da41470b85a864f9 (a2529296d97384c6d38716f6144838a120bdd565)

- 589fe748081eca9c943b63e2757ff8fd212cf87899addcd3e441cc706f8ecf8f (e079f7fbfc61c592d800f15299c7d6d39448e043)

- 58236eeaffb96a338ebb21077bd7f8be057e412bb40e91a81db4d692836dec7e (47a17d4a00ffaaea6f8cd94448fd45d86ab06d11)

- README.md +2 -2

- config.json +2 -2

- plots.png +0 -0

- smash_config.json +1 -1

README.md

CHANGED

@@ -34,7 +34,7 @@ tags:
 
 ## Results
 
-
+
 
 **Frequently Asked Questions**
 - ***How does the compression work?*** The model is compressed with llm-int8.
@@ -61,7 +61,7 @@ You can run the smashed model with these steps:
 from transformers import AutoModelForCausalLM, AutoTokenizer
 
 model = AutoModelForCausalLM.from_pretrained("PrunaAI/norallm-normistral-7b-warm-bnb-8bit-smashed",
-trust_remote_code=True)
+trust_remote_code=True, device_map='auto')
 tokenizer = AutoTokenizer.from_pretrained("norallm/normistral-7b-warm")
 
 input_ids = tokenizer("What is the color of prunes?,", return_tensors='pt').to(model.device)["input_ids"]
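The second README.md hunk adds `device_map='auto'` to the `from_pretrained` call. A minimal sketch of the updated loading flow follows, assuming `transformers`, `accelerate`, and `bitsandbytes` are installed and a CUDA GPU is available. The helper names `load_kwargs` and `generate_sample` and the `max_new_tokens` value are illustrative assumptions and do not appear in the README.

```python
MODEL_ID = "PrunaAI/norallm-normistral-7b-warm-bnb-8bit-smashed"
TOKENIZER_ID = "norallm/normistral-7b-warm"


def load_kwargs():
    """Keyword arguments passed to from_pretrained after this change."""
    return {"trust_remote_code": True, "device_map": "auto"}


def generate_sample(prompt, max_new_tokens=32):
    """Load the quantized model and generate a continuation (hypothetical helper)."""
    # Imported lazily so this module can be imported without the heavy dependencies.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model = AutoModelForCausalLM.from_pretrained(MODEL_ID, **load_kwargs())
    tokenizer = AutoTokenizer.from_pretrained(TOKENIZER_ID)
    # Move the tokenized prompt to the device where the model's weights were placed.
    input_ids = tokenizer(prompt, return_tensors="pt").to(model.device)["input_ids"]
    # max_new_tokens=32 is an assumed value chosen for a short demonstration.
    output_ids = model.generate(input_ids, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

Calling `generate_sample("What is the color of prunes?,")` downloads the checkpoint on first use, so it is best run on a machine with enough GPU memory for an 8-bit 7B model.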

config.json

CHANGED

@@ -1,5 +1,5 @@
 {
-"_name_or_path": "/tmp/
+"_name_or_path": "/tmp/tmpg1qf4342",
 "architectures": [
 "MistralForCausalLM"
 ],
@@ -19,7 +19,7 @@
 "quantization_config": {
 "bnb_4bit_compute_dtype": "bfloat16",
 "bnb_4bit_quant_type": "fp4",
-"bnb_4bit_use_double_quant":
+"bnb_4bit_use_double_quant": false,
 "llm_int8_enable_fp32_cpu_offload": false,
 "llm_int8_has_fp16_weight": false,
 "llm_int8_skip_modules": [
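As a quick sanity check on the `quantization_config` values shown on the new side of the second hunk, the sketch below parses a fragment with only the standard library and confirms that the flag fields are JSON booleans. The fragment is limited to the keys visible in this diff; the helper name `non_boolean_flags` is a hypothetical choice, and the real `config.json` contains more keys than are shown here.

```python
import json

# Fragment copied from the new side of the hunk; the real file has more keys.
FRAGMENT = """
{
  "bnb_4bit_compute_dtype": "bfloat16",
  "bnb_4bit_quant_type": "fp4",
  "bnb_4bit_use_double_quant": false,
  "llm_int8_enable_fp32_cpu_offload": false,
  "llm_int8_has_fp16_weight": false
}
"""

# Keys from the diff that are expected to hold true/false values.
BOOLEAN_KEYS = (
    "bnb_4bit_use_double_quant",
    "llm_int8_enable_fp32_cpu_offload",
    "llm_int8_has_fp16_weight",
)


def non_boolean_flags(quantization_config):
    """Return the flag names that are missing or not JSON booleans."""
    return [
        key
        for key in BOOLEAN_KEYS
        if not isinstance(quantization_config.get(key), bool)
    ]


config = json.loads(FRAGMENT)
print(non_boolean_flags(config))  # prints []
```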

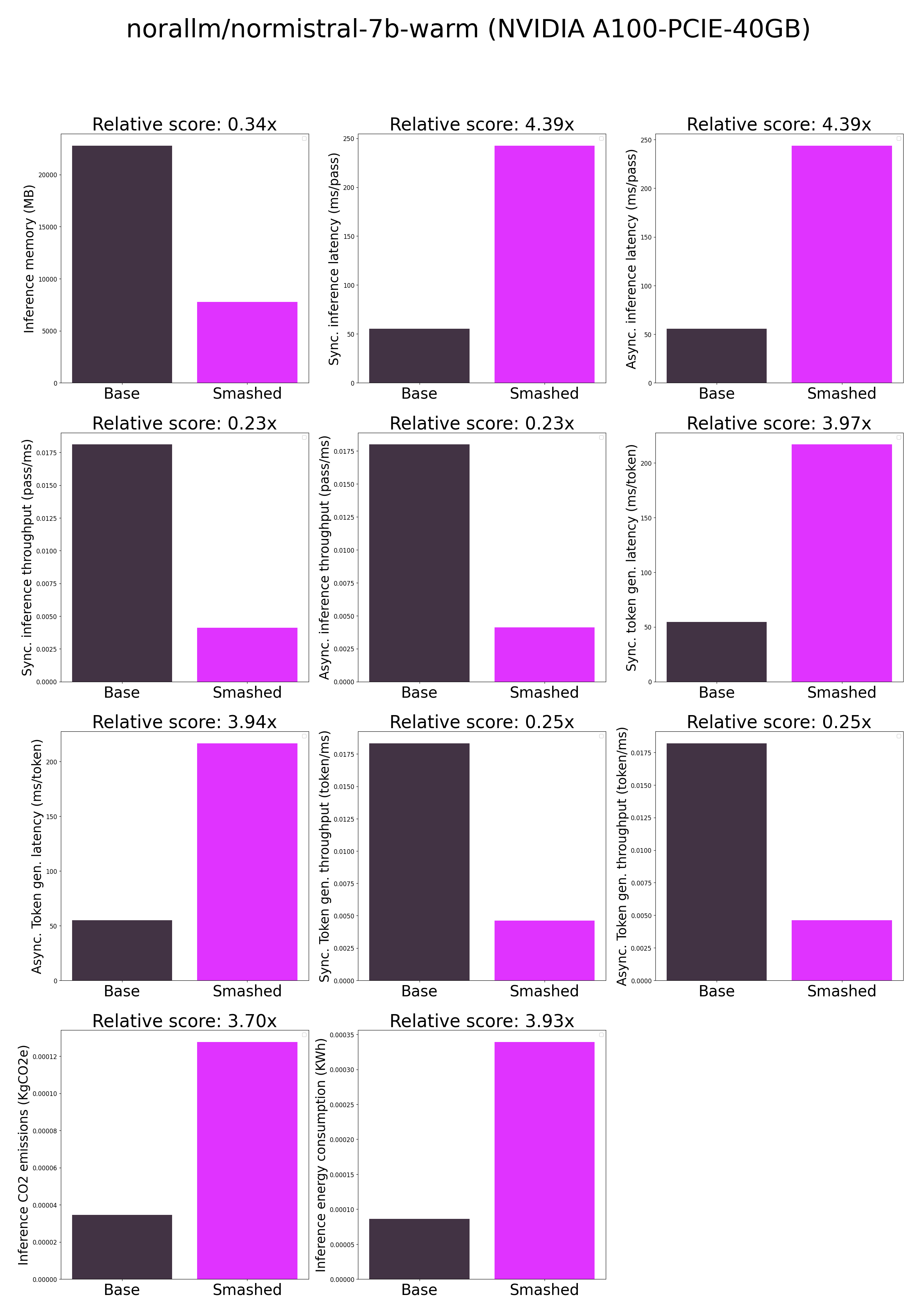

plots.png

ADDED


smash_config.json

CHANGED

@@ -8,7 +8,7 @@
 "compilers": "None",
 "task": "text_text_generation",
 "device": "cuda",
-"cache_dir": "/ceph/hdd/staff/charpent/.cache/
+"cache_dir": "/ceph/hdd/staff/charpent/.cache/models6ifiukjd",
 "batch_size": 1,
 "model_name": "norallm/normistral-7b-warm",
 "pruning_ratio": 0.0,