Commit 0d83b20

1 Parent(s): a1b0f82

Upload README.md with huggingface_hub

README.md ADDED

@@ -0,0 +1,93 @@

---
license: cc-by-4.0
language:
- en
base_model:
- mistralai/Mistral-Nemo-Instruct-2407
- Epiculous/Violet_Twilight-v0.2
- NeverSleep/Lumimaid-v0.2-12B
- flammenai/Mahou-1.5-mistral-nemo-12B
- Sao10K/MN-12B-Lyra-v4
library_name: transformers
tags:
- storywriting
- text adventure
- creative
- story
- writing
- fiction
- roleplaying
- rp
- mergekit
- merge
---

[](https://hf.co/QuantFactory)

# QuantFactory/MN-Violet-Lotus-12B-GGUF
This is a quantized version of [FallenMerick/MN-Violet-Lotus-12B](https://huggingface.co/FallenMerick/MN-Violet-Lotus-12B) created using llama.cpp.

| 35 |

+

# Original Model Card

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

# MN-Violet-Lotus-12B

|

| 41 |

+

|

| 42 |

+

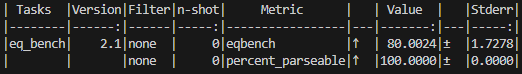

This is the model I was trying to create when [Chunky-Lotus](https://huggingface.co/FallenMerick/MN-Chunky-Lotus-12B) emerged. Not only does this model score higher on my local EQ benchmarks (80.00 w/ 100% parsed @ 8-bit), but it does an even better job at roleplaying and producing creative outputs while still adhering to wide ranges of character personalities. The high levels of emotional intelligence are really quite noticeable as well.

|

| 43 |

+

|

| 44 |

+

|

| 45 |

+

|

| 46 |

+

Once again, models tend to score higher on my local tests when compared to their posted scores, but this has become the new high score for models I've personally tested.

|

| 47 |

+

|

| 48 |

+

I really like the way this model writes, and I hope you'll enjoy using it as well!

|

| 49 |

+

|

GGUF Quants:
* https://huggingface.co/backyardai/MN-Violet-Lotus-12B-GGUF
* https://huggingface.co/mradermacher/MN-Violet-Lotus-12B-GGUF
* https://huggingface.co/mradermacher/MN-Violet-Lotus-12B-i1-GGUF

## Recommended ST Settings

Special thanks to [@Zeldazackman](https://huggingface.co/Zeldazackman) for these amazing ST settings that I now wholeheartedly recommend!

## Merge Details

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

### Merge Method

This model was merged using the [Model Stock](https://arxiv.org/abs/2403.19522) merge method.
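
As a rough illustration of what Model Stock does, here is a minimal pure-Python sketch of the interpolation described in the linked paper, applied to a single flattened weight vector. The function names (`cosine`, `model_stock_merge`) and the toy vectors are hypothetical, and this is not mergekit's implementation, which works per tensor and may differ in detail. The assumption is that the merged weights are `t * average + (1 - t) * base`, with `t = k*cos / (1 + (k-1)*cos)` for `k` fine-tuned models and `cos` the average pairwise cosine between their task vectors.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def model_stock_merge(base, finetuned):
    """Merge one flattened weight vector; requires at least two fine-tuned models.

    Returns (merged_weights, t), where t is the interpolation ratio.
    """
    k = len(finetuned)
    # Task vectors: how far each fine-tuned model moved away from the base.
    deltas = [[w - b for w, b in zip(model, base)] for model in finetuned]
    # Average pairwise cosine similarity between the task vectors.
    pairs = [(i, j) for i in range(k) for j in range(i + 1, k)]
    cos_theta = sum(cosine(deltas[i], deltas[j]) for i, j in pairs) / len(pairs)
    # Interpolation ratio (assumed form, see the lead-in above).
    t = k * cos_theta / (1 + (k - 1) * cos_theta)
    # Plain average of the fine-tuned weights.
    avg = [sum(vals) / k for vals in zip(*finetuned)]
    # Pull the average back toward the base model by (1 - t).
    merged = [t * a + (1 - t) * b for a, b in zip(avg, base)]
    return merged, t


# Toy example with two hypothetical "models" that agree on one direction.
merged, t = model_stock_merge([0.0, 0.0], [[1.0, 0.0], [1.0, 1.0]])
print(merged, t)
```

The more the fine-tuned models agree with each other (cosine close to 1), the closer `t` gets to 1 and the more the result resembles their plain average; when they disagree, the result stays near the base model, which is why the base acts as an anchor.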

### Models Merged

The following models were included in the merge:
* [Epiculous/Violet_Twilight-v0.2](https://huggingface.co/Epiculous/Violet_Twilight-v0.2)
* [NeverSleep/Lumimaid-v0.2-12B](https://huggingface.co/NeverSleep/Lumimaid-v0.2-12B)
* [flammenai/Mahou-1.5-mistral-nemo-12B](https://huggingface.co/flammenai/Mahou-1.5-mistral-nemo-12B)
* [Sao10K/MN-12B-Lyra-v4](https://huggingface.co/Sao10K/MN-12B-Lyra-v4)

### Configuration

The following YAML configuration was used to produce this model:

```yaml
models:
  - model: FallenMerick/MN-Twilight-Maid-SLERP-12B #(unreleased)
  - model: Sao10K/MN-12B-Lyra-v4
  - model: flammenai/Mahou-1.5-mistral-nemo-12B
merge_method: model_stock
base_model: mistralai/Mistral-Nemo-Instruct-2407
parameters:
  normalize: true
dtype: bfloat16
```

In this recipe, Violet Twilight and Lumimaid were first blended using the SLERP method to create a strong roleplaying foundation. Lyra v4 was then added to the mix for its great creativity and roleplaying performance, along with Mahou to once again curtail the outputs and prevent the resulting model from becoming too wordy. Model Stock was used for the final merge in order to push the resulting weights in the proper direction while using Nemo Instruct as a strong anchor point.
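
To make the first step of the recipe more concrete, the following is a minimal sketch of spherical linear interpolation (SLERP) between two flattened weight vectors in pure Python. The function name `slerp`, the toy vectors, and the interpolation factor `t = 0.5` are assumptions for illustration only: the settings actually used for the unreleased MN-Twilight-Maid-SLERP-12B merge are not stated here, and mergekit's own SLERP code may differ.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherically interpolate between non-zero vectors v0 (t=0) and v1 (t=1)."""
    norm0 = math.sqrt(sum(a * a for a in v0))
    norm1 = math.sqrt(sum(b * b for b in v1))
    # Cosine of the angle between the two vectors, clamped for acos.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    dot = max(-1.0, min(1.0, dot))
    theta = math.acos(dot)
    sin_theta = math.sin(theta)
    if abs(sin_theta) < eps:
        # Vectors are (anti)parallel: fall back to plain linear interpolation.
        return [(1 - t) * a + t * b for a, b in zip(v0, v1)]
    # Weights that move along the arc between v0 and v1 at constant angular speed.
    s0 = math.sin((1 - t) * theta) / sin_theta
    s1 = math.sin(t * theta) / sin_theta
    return [s0 * a + s1 * b for a, b in zip(v0, v1)]


# Halfway between two orthogonal toy vectors (hypothetical values).
print(slerp(0.5, [1.0, 0.0], [0.0, 1.0]))
```

Unlike a straight average, SLERP follows the arc between the two weight vectors, so the midpoint of two orthogonal unit vectors keeps unit length instead of shrinking.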