---
datasets:
- BatsResearch/ctga-v1
language:
- en
library_name: transformers
pipeline_tag: text2text-generation
tags:
- data generation
license: apache-2.0
---

# Bonito-v1 GGUF

The original model is available at [BatsResearch/bonito-v1](https://huggingface.co/BatsResearch/bonito-v1).

## Variations

| Name | Quant method | Bits | Size |
| ---- | ---- | ---- | ---- |
| [bonito-v1_iq4_nl.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_iq4_nl.gguf) | IQ4_NL | 4 | 4.16 GB |
| [bonito-v1_q4_k_m.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_q4_k_m.gguf) | Q4_K_M | 4 | 4.37 GB |
| [bonito-v1_q5_k_s.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_q5_k_s.gguf) | Q5_K_S | 5 | 5.00 GB |
| [bonito-v1_q5_k_m.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_q5_k_m.gguf) | Q5_K_M | 5 | 5.13 GB |
| [bonito-v1_q6_k.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_q6_k.gguf) | Q6_K | 6 | 5.94 GB |
| [bonito-v1_q8_0.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_q8_0.gguf) | Q8_0 | 8 | 7.70 GB |
| [bonito-v1_f16.gguf](https://huggingface.co/alexandreteles/bonito-v1-gguf/blob/main/bonito-v1_f16.gguf) | FP16 | 16 | 14.5 GB |
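
As a rough aid for choosing among the files above, the sketch below picks the largest variant whose file fits within a size budget. The file names (taken from the link targets) and sizes are copied from the table; the `pick_variant` helper and its budget semantics are illustrative assumptions, not part of any library. Runtime memory use is typically somewhat higher than the file size, so leave headroom when setting the budget.

```python
from typing import Optional

# (file name, quant method, bits, file size in GB), copied from the table above.
VARIANTS = [
    ("bonito-v1_iq4_nl.gguf", "IQ4_NL", 4, 4.16),
    ("bonito-v1_q4_k_m.gguf", "Q4_K_M", 4, 4.37),
    ("bonito-v1_q5_k_s.gguf", "Q5_K_S", 5, 5.00),
    ("bonito-v1_q5_k_m.gguf", "Q5_K_M", 5, 5.13),
    ("bonito-v1_q6_k.gguf", "Q6_K", 6, 5.94),
    ("bonito-v1_q8_0.gguf", "Q8_0", 8, 7.70),
    ("bonito-v1_f16.gguf", "FP16", 16, 14.5),
]


def pick_variant(budget_gb: float) -> Optional[str]:
    """Hypothetical helper: return the largest file not exceeding budget_gb."""
    fitting = [v for v in VARIANTS if v[3] <= budget_gb]
    if not fitting:
        return None  # Nothing fits; even the smallest file is too large.
    return max(fitting, key=lambda v: v[3])[0]


if __name__ == "__main__":
    print(pick_variant(6.0))
```

Picking by file size alone ignores quality differences between quantization methods of similar size, so treat the result as a starting point rather than a recommendation.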

## Model Card for bonito

<!-- Provide a quick summary of what the model is/does. -->

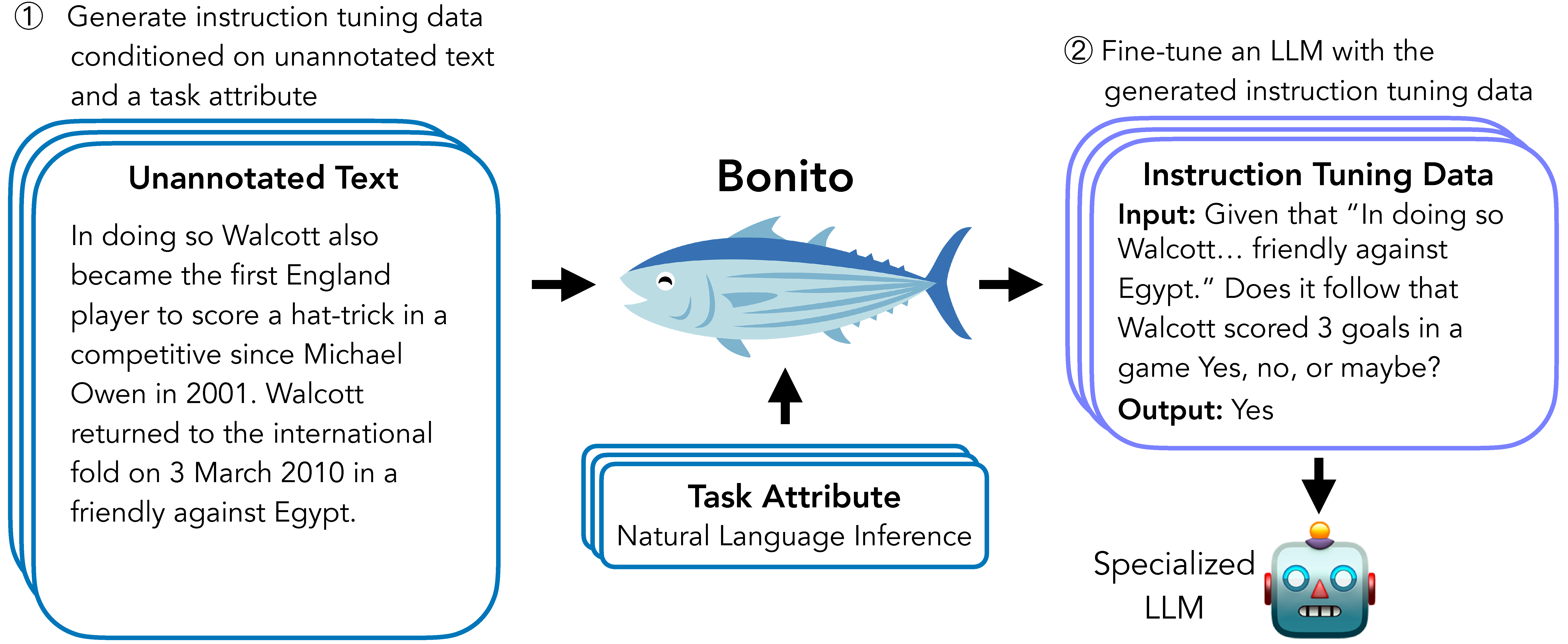

Bonito is an open-source model for conditional task generation: the task of converting unannotated text into task-specific training datasets for instruction tuning.

## Model Details

### Model Description

<!-- Provide a longer summary of what this model is. -->

Bonito can be used to create synthetic instruction tuning datasets to adapt large language models to users' specialized, private data.

In our [paper](https://github.com/BatsResearch/bonito), we show that Bonito can be used to adapt both pretrained and instruction tuned models to tasks without any annotations.

- **Developed by:** Nihal V. Nayak, Yiyang Nan, Avi Trost, and Stephen H. Bach

- **Model type:** MistralForCausalLM

- **Language(s) (NLP):** English

- **License:** TBD

- **Finetuned from model:** `mistralai/Mistral-7B-v0.1`

### Model Sources

<!-- Provide the basic links for the model. -->

- **Repository:** [https://github.com/BatsResearch/bonito](https://github.com/BatsResearch/bonito)

- **Paper:** Arxiv link

## Uses

<!-- Address questions around how the model is intended to be used, including the foreseeable users of the model and those affected by the model. -->

### Direct Use

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

To generate synthetic instruction tuning datasets, we recommend the [bonito](https://github.com/BatsResearch/bonito) package, which is built on the `transformers` and `vllm` libraries.

```python
from bonito import Bonito, SamplingParams
from datasets import load_dataset

# Initialize the Bonito model
bonito = Bonito()

# Load a dataset with unannotated text
unannotated_text = load_dataset(
    "BatsResearch/bonito-experiment",
    "unannotated_contract_nli"
)["train"].select(range(10))

# Generate a synthetic instruction tuning dataset
sampling_params = SamplingParams(max_tokens=256, top_p=0.95, temperature=0.5, n=1)
synthetic_dataset = bonito.generate_tasks(
    unannotated_text,
    context_col="input",
    task_type="nli",
    sampling_params=sampling_params
)
```

### Out-of-Scope Use

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

Our model is trained to generate the following task types: summarization, sentiment analysis, multiple-choice question answering, extractive question answering, topic classification, natural language inference, question generation, text generation, question answering without choices, paraphrase identification, sentence completion, yes-no question answering, word sense disambiguation, paraphrase generation, textual entailment, and coreference resolution.

The model might not produce accurate synthetic tasks beyond these task types.
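
One way to guard against out-of-scope requests is to check a task type against the list above before generating. The sketch below does this with the full task names exactly as listed in this section; the `is_supported_task_type` helper is hypothetical and not part of the bonito package, which may accept different identifiers (the usage example passes the short form `"nli"`).

```python
# Full task type names, copied from the list in this section.
SUPPORTED_TASK_TYPES = frozenset({
    "summarization",
    "sentiment analysis",
    "multiple-choice question answering",
    "extractive question answering",
    "topic classification",
    "natural language inference",
    "question generation",
    "text generation",
    "question answering without choices",
    "paraphrase identification",
    "sentence completion",
    "yes-no question answering",
    "word sense disambiguation",
    "paraphrase generation",
    "textual entailment",
    "coreference resolution",
})


def is_supported_task_type(name: str) -> bool:
    """Hypothetical helper: True if name is one of the listed task types."""
    # Normalize case and collapse repeated whitespace before comparing.
    normalized = " ".join(name.lower().split())
    return normalized in SUPPORTED_TASK_TYPES


if __name__ == "__main__":
    for candidate in ("Natural Language Inference", "machine translation"):
        print(candidate, is_supported_task_type(candidate))
```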