---

base_model:

- meta-llama/Meta-Llama-3-8B

library_name: transformers

tags:

- mergekit

- merge

- llama3

license: llama3

language:

- en

---

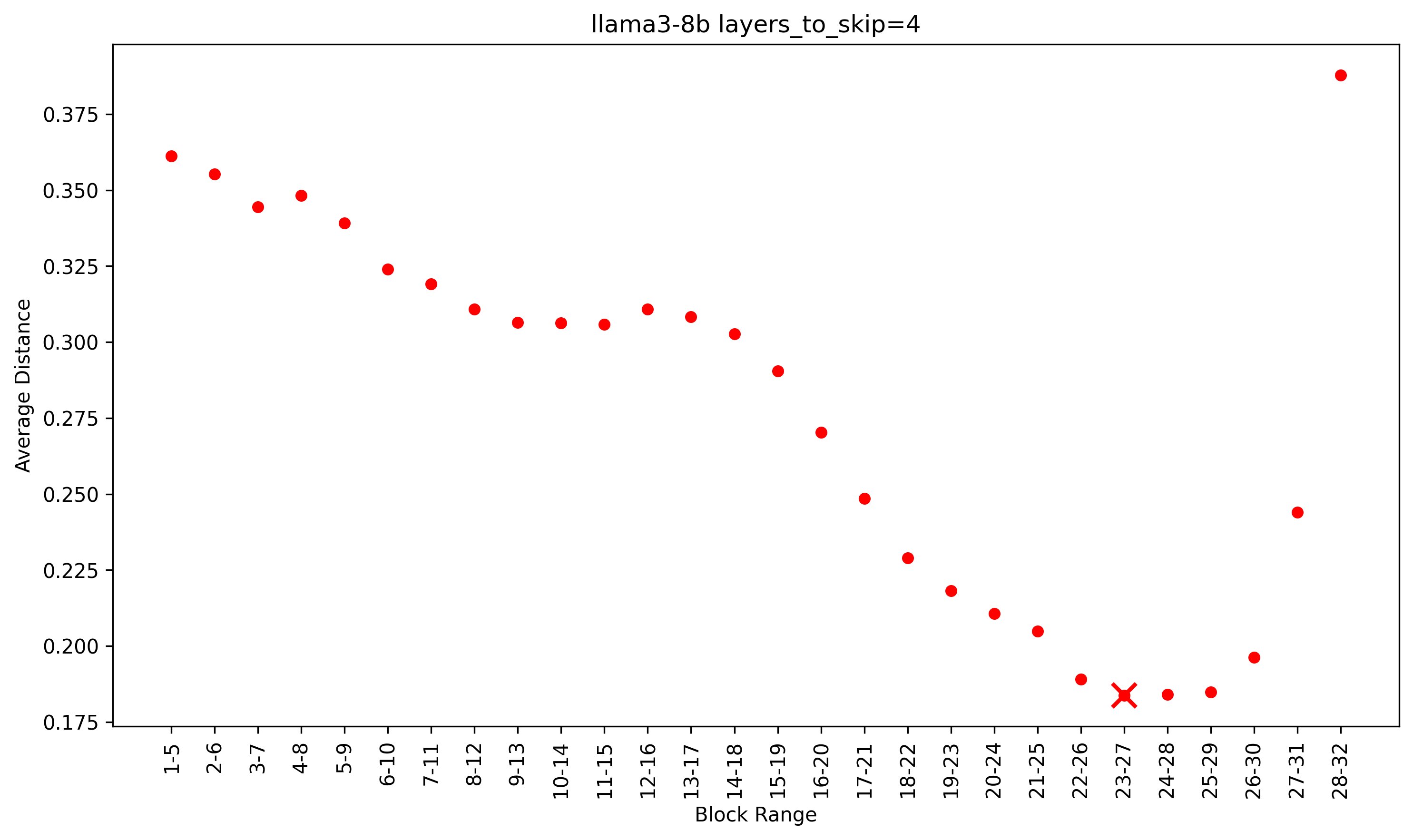

Meta's Llama 3 8B pruned to 7B parameters (with 28 layers). The layers to prune were selected using the PruneMe repo on GitHub.

- layers_to_skip = 4

- Layers 23 to 27 have the minimum average distance of 0.18376044921875

- [ ] To do: post-pruning training.
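As a rough sanity check on the "8B to 7B" figure, the parameter count can be worked out from the architecture alone. The sketch below assumes the published Llama 3 8B configuration values (hidden size 4096, MLP size 14336, 32 attention heads, 8 key/value heads, vocabulary 128256, untied output head); the function names are hypothetical and only for illustration.

```python
# Architecture values assumed from the public Llama 3 8B config.json.
HIDDEN = 4096
INTERMEDIATE = 14336
N_HEADS = 32
N_KV_HEADS = 8
VOCAB = 128256


def layer_params() -> int:
    """Parameters in one decoder layer (attention + MLP + two RMSNorms)."""
    head_dim = HIDDEN // N_HEADS
    kv_dim = N_KV_HEADS * head_dim
    attn = 2 * HIDDEN * HIDDEN + 2 * HIDDEN * kv_dim  # q_proj, o_proj + k_proj, v_proj
    mlp = 3 * HIDDEN * INTERMEDIATE                   # gate_proj, up_proj, down_proj
    norms = 2 * HIDDEN                                # input and post-attention norms
    return attn + mlp + norms


def model_params(n_layers: int) -> int:
    """Total parameters for a model with n_layers decoder layers."""
    embeddings = 2 * VOCAB * HIDDEN  # input embeddings + untied lm_head
    final_norm = HIDDEN
    return n_layers * layer_params() + embeddings + final_norm


if __name__ == "__main__":
    for n in (32, 28):
        print(f"{n} layers: {model_params(n) / 1e9:.2f}B parameters")
```

Under these assumptions, dropping 4 of 32 layers removes roughly 0.87B parameters, which is consistent with the 7B figure quoted above.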

# model

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the passthrough merge method.

### Models Merged

The following models were included in the merge:

* [meta-llama/Meta-Llama-3-8B](https://huggingface.co/meta-llama/Meta-Llama-3-8B)

### Configuration

The following YAML configuration was used to produce this model:

```yaml
slices:
  - sources:
      - model: meta-llama/Meta-Llama-3-8B
        layer_range: [0, 23]
  - sources:
      - model: meta-llama/Meta-Llama-3-8B
        layer_range: [27, 32]
merge_method: passthrough
dtype: bfloat16
```
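To make the effect of the two slices concrete, the following sketch expands them into the list of retained layer indices. It assumes `layer_range` is half-open (start inclusive, end exclusive) and that the merged model renumbers the kept layers consecutively; both are assumptions about how the passthrough output is laid out, and the helper names are hypothetical.

```python
# Layer ranges copied from the configuration above, treated as half-open [start, end).
SLICES = [(0, 23), (27, 32)]
ORIGINAL_LAYERS = 32  # decoder layers in Meta-Llama-3-8B


def kept_layers(slices):
    """Original layer indices retained by the slices, in output order."""
    return [i for start, end in slices for i in range(start, end)]


def dropped_layers(slices, total):
    """Original layer indices that no slice covers."""
    kept = set(kept_layers(slices))
    return [i for i in range(total) if i not in kept]


def renumbering(slices):
    """Map each original layer index to its assumed index in the pruned model."""
    return {old: new for new, old in enumerate(kept_layers(slices))}


if __name__ == "__main__":
    print("kept:", len(kept_layers(SLICES)))
    print("dropped:", dropped_layers(SLICES, ORIGINAL_LAYERS))
    print("original layer 27 becomes:", renumbering(SLICES)[27])
```

Read this way, the configuration keeps 28 layers and drops original layers 23 through 26, which matches `layers_to_skip = 4`.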