LucidFusion: Generating 3D Gaussians with Arbitrary Unposed Images

Hao He* Yixun Liang*, Luozhou Wang, Yuanhao Cai, Xinli Xu, Hao-Xiang Guo, Xiang Wen, Yingcong Chen**

*: Equal contribution. **: Corresponding author.

Paper PDF | Project Page | [Gradio Demo](Coming Soon)

Demo results of our latest model

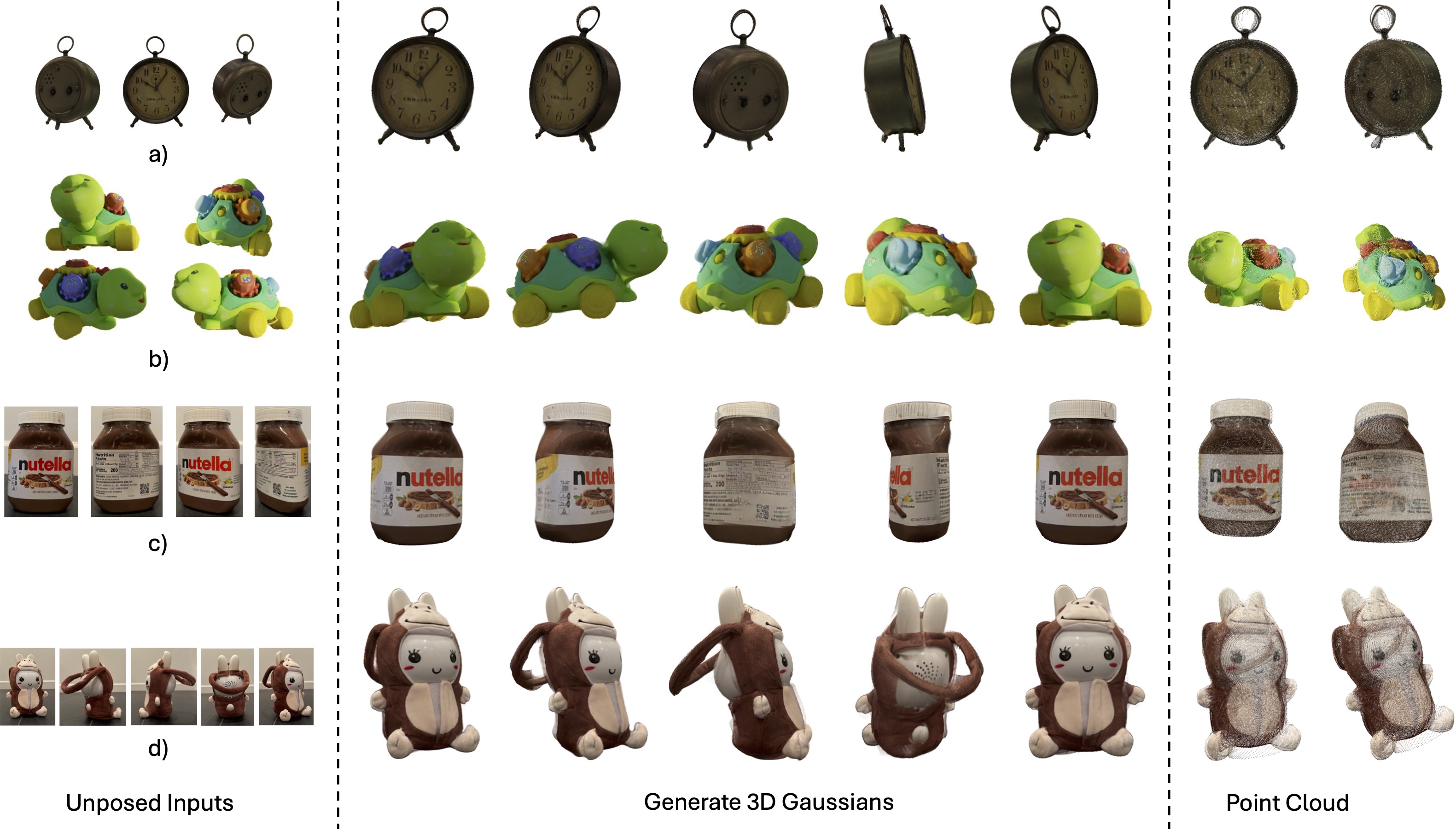

We present a flexible end-to-end feed-forward framework, named the LucidFusion, to generate high-resolution 3D Gaussians from unposed, sparse, and arbitrary numbers of multiview images.

Examples of cross-dataset content creations with our framework, the LucidFusion, around ~13FPS on A800.

🎏 Abstract

We present a flexible end-to-end feed-forward framework, named the LucidFusion, to generate high-resolution 3D Gaussians from unposed, sparse, and arbitrary numbers of multiview images.

CLICK for the full abstract

Recent large reconstruction models have made notable progress in generating high-quality 3D objects from single images. However, these methods often struggle with controllability, as they lack information from multiple views, leading to incomplete or inconsistent 3D reconstructions. To address this limitation, we introduce LucidFusion, a flexible end-to-end feed-forward framework that leverages the Relative Coordinate Map (RCM). Unlike traditional methods linking images to 3D world thorough pose, LucidFusion utilizes RCM to align geometric features coherently across different views, making it highly adaptable for 3D generation from arbitrary, unposed images. Furthermore, LucidFusion seamlessly integrates with the original single-image-to-3D pipeline, producing detailed 3D Gaussians at a resolution of $512 \times 512$, making it well-suited for a wide range of applications.

🔧 Training Instructions

Our inference code is now released!

Please refer to our repo for more details.

Pretrained Weights

Our current model loads pre-trained diffusion model for config. We use stable-diffusion-2-1-base, to download it, simply run

python pretrained/download.py

You can omit this step if you already have stable-diffusion-2-1-base, and simply update "model_key" with your local SD-2-1 path for scripts in scripts/ folder.

Our pre-trained weights is released!

🚧 Todo

- Release the inference codes

- Release our weights

- Release the Gardio Demo

- Release the Stage 1 and 2 training codes

📍 Citation

If you find our work useful, please consider citing our paper.

@misc{he2024lucidfusion,

title={LucidFusion: Generating 3D Gaussians with Arbitrary Unposed Images},

author={Hao He and Yixun Liang and Luozhou Wang and Yuanhao Cai and Xinli Xu and Hao-Xiang Guo and Xiang Wen and Yingcong Chen},

year={2024},

eprint={2410.15636},

archivePrefix={arXiv},

primaryClass={cs.CV},

url={https://arxiv.org/abs/2410.15636},

}

💼 Acknowledgement

This work is built on many amazing research works and open-source projects:

Thanks for their excellent work and great contribution to 3D generation area.

- Downloads last month

- 12