Update README.md

README.md

CHANGED

|

@@ -13,7 +13,7 @@

|

|

| 13 |

</sup>

|

| 14 |

<div> </div>

|

| 15 |

</div>

|

| 16 |

-

|

| 17 |

[](https://github.com/internLM/OpenCompass/)

|

| 18 |

|

| 19 |

[🤔Reporting Issues](https://github.com/InternLM/InternLM/issues/new)

|

|

@@ -23,9 +23,8 @@

|

|

| 23 |

|

| 24 |

## Introduction

|

| 25 |

|

| 26 |

-

InternLM has open-sourced a 7 billion parameter base model

|

| 27 |

- It leverages trillions of high-quality tokens for training to establish a powerful knowledge base.

|

| 28 |

-

- It supports an 8k context window length, enabling longer input sequences and stronger reasoning capabilities.

|

| 29 |

- It provides a versatile toolset for users to flexibly build their own workflows.

|

| 30 |

|

| 31 |

## InternLM-7B

|

|

@@ -57,34 +56,18 @@ We conducted a comprehensive evaluation of InternLM using the open-source evalua

|

|

| 57 |

To load the InternLM 7B Chat model using Transformers, use the following code:

|

| 58 |

```python

|

| 59 |

>>> from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 60 |

-

>>> tokenizer = AutoTokenizer.from_pretrained("internlm/internlm-

|

| 61 |

-

>>> model = AutoModelForCausalLM.from_pretrained("internlm/internlm-

|

| 62 |

>>> model = model.eval()

|

| 63 |

-

>>>

|

| 64 |

-

>>>

|

| 65 |

-

|

| 66 |

-

>>>

|

| 67 |

-

>>>

|

| 68 |

-

|

| 69 |

-

|

| 70 |

-

|

| 71 |

-

2. Use a calendar or planner: Write down deadlines and appointments in a calendar or planner so you don't forget them. This will also help you schedule your time more effectively and avoid overbooking yourself.

|

| 72 |

-

3. Minimize distractions: Try to eliminate any potential distractions when working on important tasks. Turn off notifications on your phone, close unnecessary tabs on your computer, and find a quiet place to work if possible.

|

| 73 |

-

|

| 74 |

-

Remember, good time management skills take practice and patience. Start with small steps and gradually incorporate these habits into your daily routine.

|

| 75 |

-

```

|

| 76 |

-

|

| 77 |

-

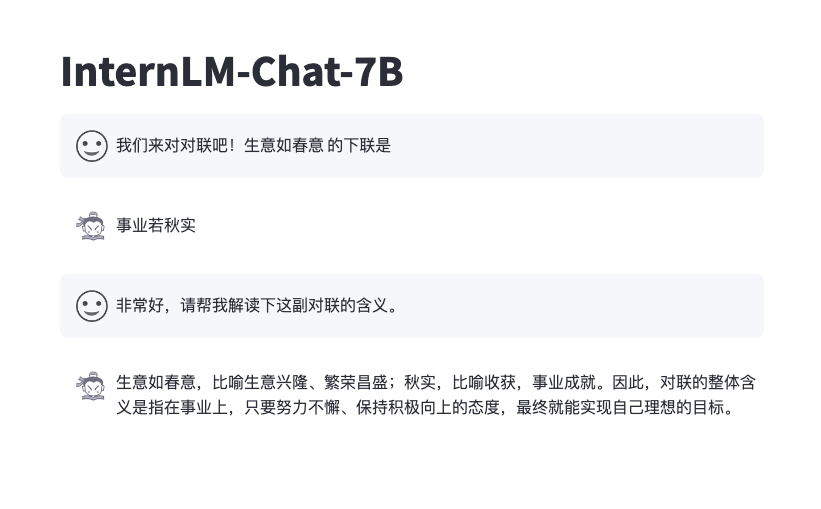

### Dialogue

|

| 78 |

-

|

| 79 |

-

You can interact with the InternLM Chat 7B model through a frontend interface by running the following code:

|

| 80 |

-

```bash

|

| 81 |

-

pip install streamlit==1.24.0

|

| 82 |

-

pip install transformers==4.30.2

|

| 83 |

-

streamlit run web_demo.py

|

| 84 |

```

|

| 85 |

-

The result looks like this:

|

| 86 |

-

|

| 87 |

-

|

| 88 |

|

| 89 |

## Open Source License

|

| 90 |

|

|

@@ -92,9 +75,8 @@ The InternLM weights are fully open for academic research and also allow commerc

|

|

| 92 |

|

| 93 |

|

| 94 |

## Introduction

|

| 95 |

-

InternLM ,即书生·浦语大模型,包含面向实用场景的70

|

| 96 |

- It is trained on trillions of high-quality tokens to build a powerful knowledge base.

|

| 97 |

-

- It supports an 8k context window length, enabling longer inputs and stronger reasoning.

|

| 98 |

- It provides general-purpose tool-calling capabilities, so users can flexibly build their own workflows.

|

| 99 |

|

| 100 |

## InternLM-7B

|

|

@@ -125,30 +107,18 @@ InternLM ,即书生·浦语大模型,包含面向实用场景的70亿参数

|

|

| 125 |

Load the InternLM 7B Chat model with the following code:

|

| 126 |

```python

|

| 127 |

>>> from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 128 |

-

>>> tokenizer = AutoTokenizer.from_pretrained("internlm/internlm-

|

| 129 |

-

>>> model = AutoModelForCausalLM.from_pretrained("internlm/internlm-

|

| 130 |

>>> model = model.eval()

|

| 131 |

-

>>>

|

| 132 |

-

>>>

|

| 133 |

-

|

| 134 |

-

>>>

|

| 135 |

-

>>>

|

| 136 |

-

|

| 137 |

-

|

| 138 |

-

|

| 139 |

-

3. 集中注意力:避免分心,集中注意力完成任务。关闭社交媒体和电子邮件通知,专注于任务,这将帮助您更快地完成任务,并减少错误的可能性。

|

| 140 |

-

```

|

| 141 |

-

|

| 142 |

-

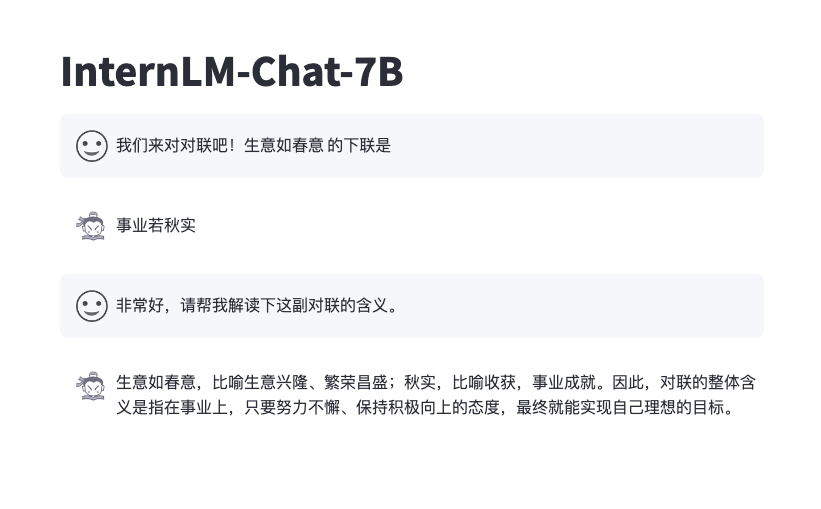

### Dialogue via a Web Frontend

|

| 143 |

-

You can launch a frontend interface to interact with the InternLM Chat 7B model by running the following code:

|

| 144 |

-

```bash

|

| 145 |

-

pip install streamlit==1.24.0

|

| 146 |

-

pip install transformers==4.30.2

|

| 147 |

-

streamlit run web_demo.py

|

| 148 |

```

|

| 149 |

-

The result looks like this:

|

| 150 |

-

|

| 151 |

-

|

| 152 |

|

| 153 |

## Open Source License

|

| 154 |

|

|

|

|

| 13 |

</sup>

|

| 14 |

<div> </div>

|

| 15 |

</div>

|

| 16 |

+

|

| 17 |

[](https://github.com/internLM/OpenCompass/)

|

| 18 |

|

| 19 |

[🤔Reporting Issues](https://github.com/InternLM/InternLM/issues/new)

|

|

|

|

| 23 |

|

| 24 |

## Introduction

|

| 25 |

|

| 26 |

+

InternLM has open-sourced a 7-billion-parameter base model tailored for practical scenarios. The model has the following characteristics:

|

| 27 |

- It leverages trillions of high-quality tokens for training to establish a powerful knowledge base.

|

|

|

|

| 28 |

- It provides a versatile toolset for users to flexibly build their own workflows.

|

| 29 |

|

| 30 |

## InternLM-7B

|

|

|

|

| 56 |

To load the InternLM 7B Chat model using Transformers, use the following code:

|

| 57 |

```python

|

| 58 |

>>> from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 59 |

+

>>> tokenizer = AutoTokenizer.from_pretrained("internlm/internlm-7b", trust_remote_code=True)

|

| 60 |

+

>>> model = AutoModelForCausalLM.from_pretrained("internlm/internlm-7b", trust_remote_code=True).cuda()

|

| 61 |

>>> model = model.eval()

|

| 62 |

+

>>> inputs = tokenizer(["A beautiful flower"], return_tensors="pt")

|

| 63 |

+

>>> for k,v in inputs.items():

|

| 64 |

+

...     inputs[k] = v.cuda()

|

| 65 |

+

>>> gen_kwargs = {"max_length": 128, "top_p": 0.8, "temperature": 0.8, "do_sample": True, "repetition_penalty": 1.1}

|

| 66 |

+

>>> output = model.generate(**inputs, **gen_kwargs)

|

| 67 |

+

>>> print(tokenizer.decode(output[0].tolist()))

|

| 68 |

+

<s> A beautiful flower box made of white rose wood. It is a perfect gift for weddings, birthdays and anniversaries.

|

| 69 |

+

All the roses are from our farm Roses Flanders. Therefor you know that these flowers last much longer than those in store or online!</s>

|

| 70 |

```
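The `gen_kwargs` in the snippet above set `temperature` and `top_p`, which together control how the next token is sampled. The following is a minimal, dependency-free sketch of that idea, not the Transformers implementation: the function names `top_p_filter` and `sample` and the toy logits are hypothetical and chosen only for illustration.

```python
import math
import random


def top_p_filter(logits, temperature=0.8, top_p=0.8):
    """Return {token_index: probability} for the tokens kept by top-p sampling."""
    # Temperature scaling: values below 1.0 sharpen the distribution.
    scaled = [x / temperature for x in logits]
    # Numerically stable softmax.
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep the most likely tokens until their cumulative mass reaches top_p.
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    # Renormalize over the kept tokens so the probabilities sum to 1.
    return {i: probs[i] / mass for i in kept}


def sample(logits, rng, temperature=0.8, top_p=0.8):
    """Draw one token index from the temperature-scaled, top-p-filtered distribution."""
    nucleus = top_p_filter(logits, temperature, top_p)
    tokens = list(nucleus)
    weights = [nucleus[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Hypothetical scores for a 4-token vocabulary (not real model output).
toy_logits = [2.0, 1.0, 0.5, -1.0]
nucleus = top_p_filter(toy_logits)
print(nucleus)
print(sample(toy_logits, random.Random(0)))
```

With these toy values, only the most likely tokens survive the filter, so unlikely continuations are never drawn. In Transformers, `max_length` additionally caps the total sequence length, counting the prompt tokens as well as the generated ones.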

|

| 71 |

|

| 72 |

## Open Source License

|

| 73 |

|

|

|

|

| 75 |

|

| 76 |

|

| 77 |

## Introduction

|

| 78 |

+

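InternLM, also known as the 书生·浦语 large language model, includes a 7-billion-parameter base model (InternLM-7B) tailored for practical scenarios. The model has the following characteristics: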

|

| 79 |

- It is trained on trillions of high-quality tokens to build a powerful knowledge base.

|

|

|

|

| 80 |

- It provides general-purpose tool-calling capabilities, so users can flexibly build their own workflows.

|

| 81 |

|

| 82 |

## InternLM-7B

|

|

|

|

| 107 |

Load the InternLM 7B Chat model with the following code:

|

| 108 |

```python

|

| 109 |

>>> from transformers import AutoTokenizer, AutoModelForCausalLM

|

| 110 |

+

>>> tokenizer = AutoTokenizer.from_pretrained("internlm/internlm-7b", trust_remote_code=True)

|

| 111 |

+

>>> model = AutoModelForCausalLM.from_pretrained("internlm/internlm-7b", trust_remote_code=True).cuda()

|

| 112 |

>>> model = model.eval()

|

| 113 |

+

>>> inputs = tokenizer(["来到美丽的大自然,我们发现"], return_tensors="pt")

|

| 114 |

+

>>> for k,v in inputs.items():

|

| 115 |

+

...     inputs[k] = v.cuda()

|

| 116 |

+

>>> gen_kwargs = {"max_length": 128, "top_p": 0.8, "temperature": 0.8, "do_sample": True, "repetition_penalty": 1.1}

|

| 117 |

+

>>> output = model.generate(**inputs, **gen_kwargs)

|

| 118 |

+

>>> print(tokenizer.decode(output[0].tolist()))

|

| 119 |

+

来到美丽的大自然,我们发现各种各样的花千奇百怪。有的颜色鲜艳亮丽,使人感觉生机勃勃;有的是红色的花瓣儿粉嫩嫩的像少女害羞的脸庞一样让人爱不释手.有的小巧玲珑; 还有的花瓣粗大看似枯黄实则暗藏玄机!

|

| 120 |

+

不同的花卉有不同的“脾气”,它们都有着属于自己的故事和人生道理.这些鲜花都是大自然中最为原始的物种,每一朵都绽放出别样的美令人陶醉、着迷!

|

| 121 |

```

|

| 122 |

|

| 123 |

## Open Source License

|

| 124 |

|