Added model card (#1)

- Added model card (929b4d52ebef7a4ca841117bb646ab0fbd2cd644)

Co-authored-by: Depu Meng <DepuMeng@users.noreply.huggingface.co>

README.md

ADDED

@@ -0,0 +1,93 @@

---
license: apache-2.0
tags:
- object-detection
- vision
datasets:
- coco
widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg
  example_title: Savanna
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg
  example_title: Football Match
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg
  example_title: Airport
---

# Conditional DETR model with ResNet-50 backbone

Conditional DEtection TRansformer (DETR) model trained end-to-end on COCO 2017 object detection (118k annotated images). It was introduced in the paper [Conditional DETR for Fast Training Convergence](https://arxiv.org/abs/2108.06152) by Meng et al. and first released in [this repository](https://github.com/Atten4Vis/ConditionalDETR).

## Model description

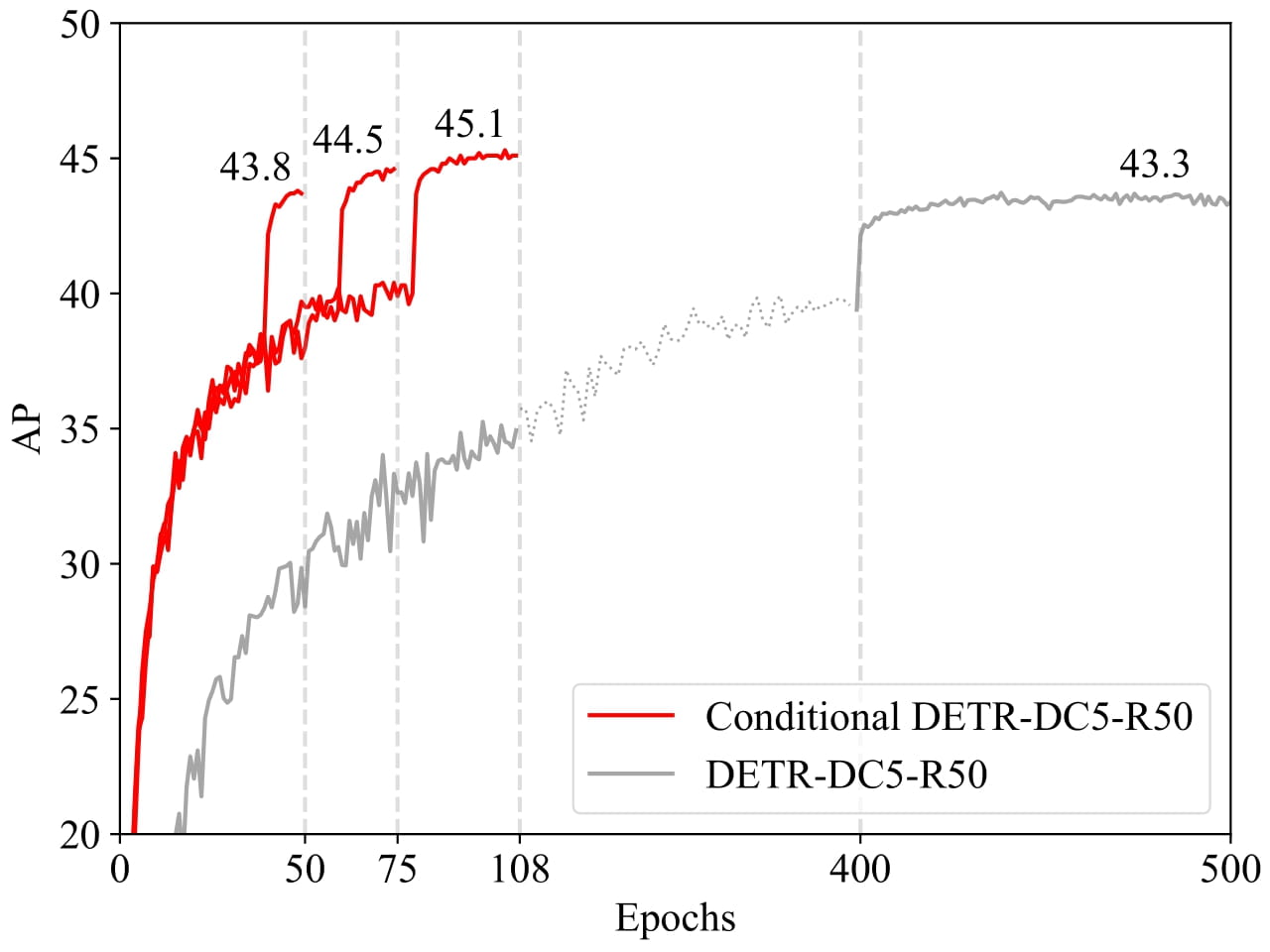

The recently developed DETR approach applies the transformer encoder-decoder architecture to object detection and achieves promising performance. In this paper, we address its critical issue, slow training convergence, and present a conditional cross-attention mechanism for fast DETR training. Our approach is motivated by the observation that the cross-attention in DETR relies heavily on the content embeddings for localizing the four extremities and predicting the box, which increases the need for high-quality content embeddings and thus the training difficulty. Our approach, named conditional DETR, learns a conditional spatial query from the decoder embedding for decoder multi-head cross-attention. The benefit is that, through the conditional spatial query, each cross-attention head can attend to a band containing a distinct region, e.g., one object extremity or a region inside the object box. This narrows the spatial range for localizing the distinct regions used for object classification and box regression, which relaxes the dependence on the content embeddings and eases training. Empirical results show that conditional DETR converges 6.7× faster for the backbones R50 and R101 and 10× faster for the stronger backbones DC5-R50 and DC5-R101.
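
To make the conditional spatial query more concrete, here is a minimal sketch in plain Python. It assumes the formulation in the paper: the spatial query is the element-wise product of a vector predicted from the decoder embedding and the positional embedding of a reference point, and content and spatial parts are concatenated before the dot product. The function names and all numbers are hypothetical; the real model uses learned projections, multiple heads, scaling and a softmax, none of which are shown here.

```python
def dot(u, v):
    """Plain dot product of two equal-length lists."""
    return sum(a * b for a, b in zip(u, v))


def conditional_attention_logit(content_query, content_key,
                                transform, ref_embedding, key_position):
    """Unnormalized cross-attention logit for one query/key pair (illustrative only)."""
    # Conditional spatial query: a vector derived from the decoder embedding
    # (here `transform`, assumed given) rescales the reference-point embedding.
    spatial_query = [t * p for t, p in zip(transform, ref_embedding)]
    # Concatenating content and spatial parts makes the dot product split into
    # a content term plus a spatial term.
    query = content_query + spatial_query
    key = content_key + key_position
    return dot(query, key)


# Hypothetical toy vectors.
logit = conditional_attention_logit(
    content_query=[1, 2], content_key=[3, 4],
    transform=[2, 0], ref_embedding=[1, 5], key_position=[3, 7],
)
print(logit)  # content term 11 + spatial term 6 = 17
```

Because the two terms are additive, the spatial term can steer a head toward a particular region even when the content term is uninformative, which is the intuition behind the relaxed dependence on content embeddings.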

## Intended uses & limitations

You can use the raw model for object detection. See the [model hub](https://huggingface.co/models?search=microsoft/conditional-detr) to look for all available Conditional DETR models.

### How to use

Here is how to use this model:

```python
from transformers import AutoFeatureExtractor, ConditionalDetrForObjectDetection
import torch
from PIL import Image
import requests

url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

feature_extractor = AutoFeatureExtractor.from_pretrained("microsoft/conditional-detr-resnet-50")
model = ConditionalDetrForObjectDetection.from_pretrained("microsoft/conditional-detr-resnet-50")

inputs = feature_extractor(images=image, return_tensors="pt")
outputs = model(**inputs)

# convert outputs (bounding boxes and class logits) to COCO API
target_sizes = torch.tensor([image.size[::-1]])
results = feature_extractor.post_process(outputs, target_sizes=target_sizes)[0]

for score, label, box in zip(results["scores"], results["labels"], results["boxes"]):
    box = [round(i, 2) for i in box.tolist()]
    # let's only keep detections with score > 0.7
    if score > 0.7:
        print(
            f"Detected {model.config.id2label[label.item()]} with confidence "
            f"{round(score.item(), 3)} at location {box}"
        )
```

This should output:

```
Detected remote with confidence 0.833 at location [38.31, 72.1, 177.63, 118.45]
Detected cat with confidence 0.831 at location [9.2, 51.38, 321.13, 469.0]
Detected cat with confidence 0.804 at location [340.3, 16.85, 642.93, 370.95]
```

Currently, both the feature extractor and model support PyTorch.
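
The post-processing call in the example above hides a coordinate conversion. DETR-style models predict boxes as normalized (center_x, center_y, width, height), and post-processing rescales them to absolute (x_min, y_min, x_max, y_max) pixel coordinates, which is the format of the printed locations. A minimal sketch of that conversion in plain Python, assuming a hypothetical helper `center_to_corners` and a hypothetical 640x480 image (this is an illustration, not the library's implementation):

```python
def center_to_corners(box, image_height, image_width):
    """Convert a normalized (cx, cy, w, h) box to absolute (x_min, y_min, x_max, y_max)."""
    cx, cy, w, h = box
    x_min = (cx - w / 2) * image_width
    y_min = (cy - h / 2) * image_height
    x_max = (cx + w / 2) * image_width
    y_max = (cy + h / 2) * image_height
    return [x_min, y_min, x_max, y_max]


# PIL reports size as (width, height); reversing it gives (height, width),
# which is why the example passes image.size[::-1] as the target size.
pil_size = (640, 480)  # hypothetical image size
image_height, image_width = pil_size[::-1]

# A hypothetical normalized prediction centred in the image.
print(center_to_corners([0.5, 0.5, 0.25, 0.5], image_height, image_width))
# prints [240.0, 120.0, 400.0, 360.0]
```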

## Training data

The Conditional DETR model was trained on [COCO 2017 object detection](https://cocodataset.org/#download), a dataset consisting of 118k/5k annotated images for training/validation respectively.

### BibTeX entry and citation info

```bibtex
@inproceedings{MengCFZLYS021,
  author    = {Depu Meng and
               Xiaokang Chen and
               Zejia Fan and
               Gang Zeng and
               Houqiang Li and
               Yuhui Yuan and
               Lei Sun and
               Jingdong Wang},
  title     = {Conditional {DETR} for Fast Training Convergence},
  booktitle = {2021 {IEEE/CVF} International Conference on Computer Vision, {ICCV}
               2021, Montreal, QC, Canada, October 10-17, 2021},
}
```