Upload folder using huggingface_hub

- README.md +235 -0

- added_tokens.json +5 -0

- all_results.json +21 -0

- cal_data.safetensors +3 -0

- config.json +26 -0

- eval_results.json +16 -0

- generation_config.json +6 -0

- input_states.safetensors +3 -0

- job.json +0 -0

- measurement.json +0 -0

- model.safetensors.index.json +298 -0

- output.safetensors +3 -0

- special_tokens_map.json +11 -0

- tokenizer.json +0 -0

- tokenizer.model +3 -0

- tokenizer_config.json +46 -0

- train_results.json +8 -0

- trainer_state.json +0 -0

- training_args.bin +3 -0

README.md

ADDED

|

@@ -0,0 +1,235 @@

| 1 |

+

---

|

| 2 |

+

tags:

|

| 3 |

+

- generated_from_trainer

|

| 4 |

+

model-index:

|

| 5 |

+

- name: zephyr-7b-beta

|

| 6 |

+

results: []

|

| 7 |

+

license: mit

|

| 8 |

+

datasets:

|

| 9 |

+

- HuggingFaceH4/ultrachat_200k

|

| 10 |

+

- HuggingFaceH4/ultrafeedback_binarized

|

| 11 |

+

language:

|

| 12 |

+

- en

|

| 13 |

+

base_model: mistralai/Mistral-7B-v0.1

|

| 14 |

+

---

|

| 15 |

+

|

| 16 |

+

<!-- This model card has been generated automatically according to the information the Trainer had access to. You

|

| 17 |

+

should probably proofread and complete it, then remove this comment. -->

|

| 18 |

+

|

| 19 |

+

<img src="https://huggingface.co/HuggingFaceH4/zephyr-7b-alpha/resolve/main/thumbnail.png" alt="Zephyr Logo" width="800" style="margin-left:auto; margin-right:auto; display:block"/>

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

# Model Card for Zephyr 7B β

|

| 23 |

+

|

| 24 |

+

Zephyr is a series of language models that are trained to act as helpful assistants. Zephyr-7B-β is the second model in the series, and is a fine-tuned version of [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) that was trained on a mix of publicly available, synthetic datasets using [Direct Preference Optimization (DPO)](https://arxiv.org/abs/2305.18290). We found that removing the in-built alignment of these datasets boosted performance on [MT Bench](https://huggingface.co/spaces/lmsys/mt-bench) and made the model more helpful. However, this means that the model is likely to generate problematic text when prompted to do so and should only be used for educational and research purposes. You can find more details in the [technical report](https://arxiv.org/abs/2310.16944).

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

## Model description

|

| 28 |

+

|

| 29 |

+

- **Model type:** A 7B parameter GPT-like model fine-tuned on a mix of publicly available, synthetic datasets.

|

| 30 |

+

- **Language(s) (NLP):** Primarily English

|

| 31 |

+

- **License:** MIT

|

| 32 |

+

- **Finetuned from model:** [mistralai/Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1)

|

| 33 |

+

|

| 34 |

+

### Model Sources

|

| 35 |

+

|

| 36 |

+

<!-- Provide the basic links for the model. -->

|

| 37 |

+

|

| 38 |

+

- **Repository:** https://github.com/huggingface/alignment-handbook

|

| 39 |

+

- **Demo:** https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat

|

| 40 |

+

- **Chatbot Arena:** Evaluate Zephyr 7B against 10+ LLMs in the LMSYS arena: http://arena.lmsys.org

|

| 41 |

+

|

| 42 |

+

## Performance

|

| 43 |

+

|

| 44 |

+

At the time of release, Zephyr-7B-β is the highest ranked 7B chat model on the [MT-Bench](https://huggingface.co/spaces/lmsys/mt-bench) and [AlpacaEval](https://tatsu-lab.github.io/alpaca_eval/) benchmarks:

|

| 45 |

+

|

| 46 |

+

| Model | Size | Alignment | MT-Bench (score) | AlpacaEval (win rate %) |

|

| 47 |

+

|-------------|-----|----|---------------|--------------|

|

| 48 |

+

| StableLM-Tuned-α | 7B| dSFT |2.75| -|

|

| 49 |

+

| MPT-Chat | 7B |dSFT |5.42| -|

|

| 50 |

+

| Xwin-LM v0.1 | 7B| dPPO| 6.19| 87.83|

|

| 51 |

+

| Mistral-Instruct v0.1 | 7B| - | 6.84 |-|

|

| 52 |

+

| Zephyr-7b-α |7B| dDPO| 6.88| -|

|

| 53 |

+

| **Zephyr-7b-β** 🪁 | **7B** | **dDPO** | **7.34** | **90.60** |

|

| 54 |

+

| Falcon-Instruct | 40B |dSFT |5.17 |45.71|

|

| 55 |

+

| Guanaco | 65B | SFT |6.41| 71.80|

|

| 56 |

+

| Llama2-Chat | 70B |RLHF |6.86| 92.66|

|

| 57 |

+

| Vicuna v1.3 | 33B |dSFT |7.12 |88.99|

|

| 58 |

+

| WizardLM v1.0 | 70B |dSFT |7.71 |-|

|

| 59 |

+

| Xwin-LM v0.1 | 70B |dPPO |- |95.57|

|

| 60 |

+

| GPT-3.5-turbo | - |RLHF |7.94 |89.37|

|

| 61 |

+

| Claude 2 | - |RLHF |8.06| 91.36|

|

| 62 |

+

| GPT-4 | -| RLHF |8.99| 95.28|

|

| 63 |

+

|

| 64 |

+

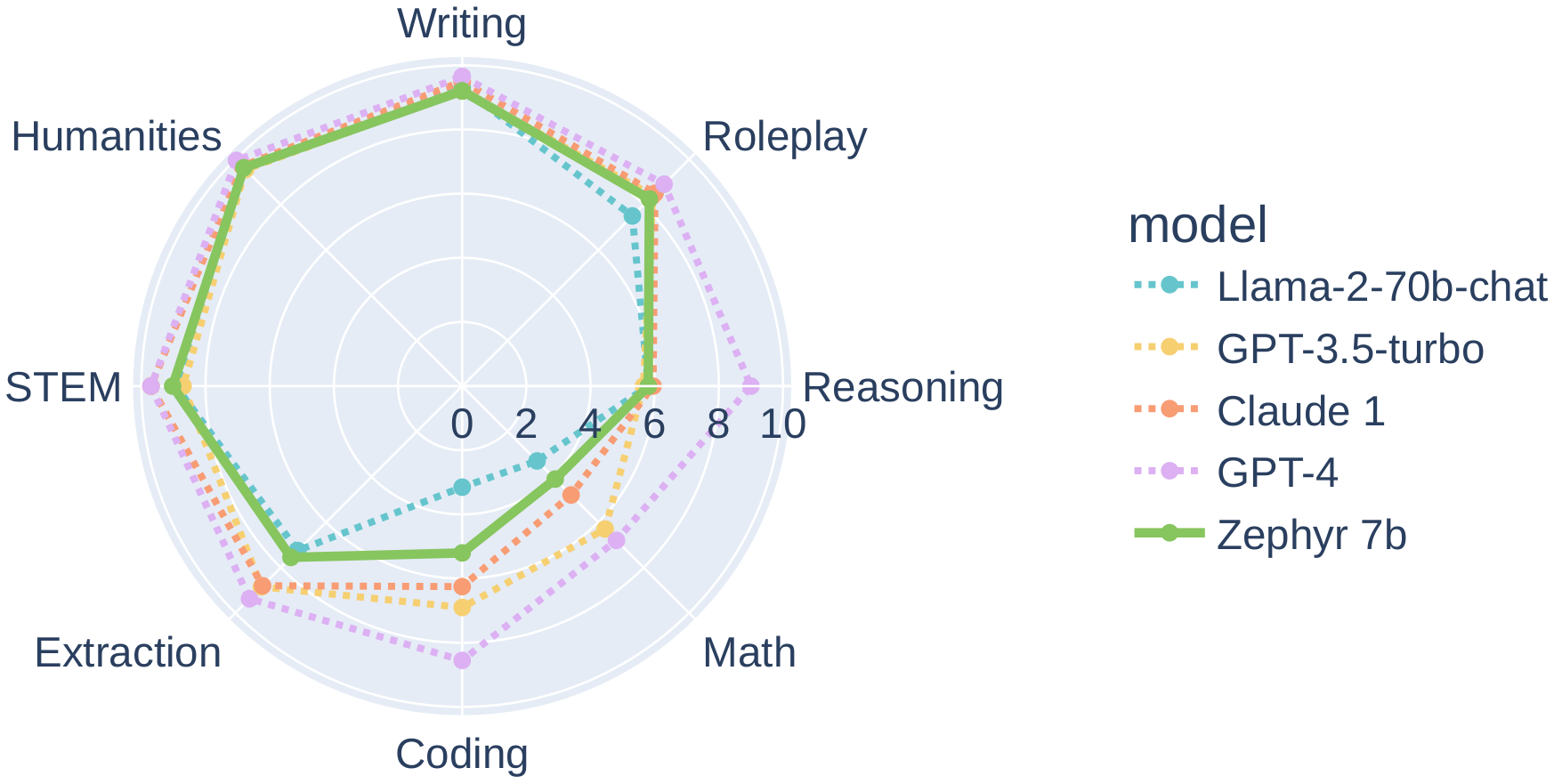

In particular, on several categories of MT-Bench, Zephyr-7B-β has strong performance compared to larger open models like Llama2-Chat-70B:

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

|

| 68 |

+

However, on more complex tasks like coding and mathematics, Zephyr-7B-β lags behind proprietary models and more research is needed to close the gap.

|

| 69 |

+

|

| 70 |

+

|

| 71 |

+

## Intended uses & limitations

|

| 72 |

+

|

| 73 |

+

The model was initially fine-tuned on a filtered and preprocessed version of the [`UltraChat`](https://huggingface.co/datasets/stingning/ultrachat) dataset, which contains a diverse range of synthetic dialogues generated by ChatGPT.

|

| 74 |

+

We then further aligned the model with [🤗 TRL's](https://github.com/huggingface/trl) `DPOTrainer` on the [openbmb/UltraFeedback](https://huggingface.co/datasets/openbmb/UltraFeedback) dataset, which contains 64k prompts and model completions that are ranked by GPT-4. As a result, the model can be used for chat and you can check out our [demo](https://huggingface.co/spaces/HuggingFaceH4/zephyr-chat) to test its capabilities.

|

| 75 |

+

|

| 76 |

+

You can find the datasets used for training Zephyr-7B-β [here](https://huggingface.co/collections/HuggingFaceH4/zephyr-7b-6538c6d6d5ddd1cbb1744a66).

|

| 77 |

+

|

| 78 |

+

Here's how you can run the model using the `pipeline()` function from 🤗 Transformers:

|

| 79 |

+

|

| 80 |

+

```python

|

| 81 |

+

# Install transformers from source - only needed for versions <= v4.34

|

| 82 |

+

# pip install git+https://github.com/huggingface/transformers.git

|

| 83 |

+

# pip install accelerate

|

| 84 |

+

|

| 85 |

+

import torch

|

| 86 |

+

from transformers import pipeline

|

| 87 |

+

|

| 88 |

+

pipe = pipeline("text-generation", model="HuggingFaceH4/zephyr-7b-beta", torch_dtype=torch.bfloat16, device_map="auto")

|

| 89 |

+

|

| 90 |

+

# We use the tokenizer's chat template to format each message - see https://huggingface.co/docs/transformers/main/en/chat_templating

|

| 91 |

+

messages = [

|

| 92 |

+

{

|

| 93 |

+

"role": "system",

|

| 94 |

+

"content": "You are a friendly chatbot who always responds in the style of a pirate",

|

| 95 |

+

},

|

| 96 |

+

{"role": "user", "content": "How many helicopters can a human eat in one sitting?"},

|

| 97 |

+

]

|

| 98 |

+

prompt = pipe.tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

|

| 99 |

+

outputs = pipe(prompt, max_new_tokens=256, do_sample=True, temperature=0.7, top_k=50, top_p=0.95)

|

| 100 |

+

print(outputs[0]["generated_text"])

|

| 101 |

+

# <|system|>

|

| 102 |

+

# You are a friendly chatbot who always responds in the style of a pirate.</s>

|

| 103 |

+

# <|user|>

|

| 104 |

+

# How many helicopters can a human eat in one sitting?</s>

|

| 105 |

+

# <|assistant|>

|

| 106 |

+

# Ah, me hearty matey! But yer question be a puzzler! A human cannot eat a helicopter in one sitting, as helicopters are not edible. They be made of metal, plastic, and other materials, not food!

|

| 107 |

+

```
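
The printed output above shows the prompt layout that the chat template produces: each turn is a `<|role|>` line followed by the content and an `</s>` end-of-sequence token. As a rough illustration only, the sketch below rebuilds that layout by hand. The helper name `format_zephyr_prompt` is hypothetical, and the exact whitespace is an assumption read off the printed output; the tokenizer's own `apply_chat_template` remains the authoritative source.

```python
# Hypothetical helper: approximates the prompt layout visible in the printed
# output above. It does not call or replace the tokenizer's chat template.
EOS_TOKEN = "</s>"  # end-of-sequence token shown after each turn in the output


def format_zephyr_prompt(messages, add_generation_prompt=True):
    """Render chat messages as '<|role|>' blocks, each terminated by EOS_TOKEN."""
    parts = []
    for message in messages:
        parts.append(f"<|{message['role']}|>\n{message['content']}{EOS_TOKEN}\n")
    if add_generation_prompt:
        # Leave an open assistant turn so the model continues from here.
        parts.append("<|assistant|>\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "You are a friendly chatbot."},
    {"role": "user", "content": "How many helicopters can a human eat in one sitting?"},
]
prompt = format_zephyr_prompt(messages)
print(prompt)
```

A string built this way is only useful for inspecting or debugging prompts; for generation, prefer the template shipped with the tokenizer so that any later template change is picked up automatically.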

|

| 108 |

+

|

| 109 |

+

## Bias, Risks, and Limitations

|

| 110 |

+

|

| 111 |

+

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

|

| 112 |

+

|

| 113 |

+

Zephyr-7B-β has not been aligned to human preferences with techniques like RLHF or deployed with in-the-loop filtering of responses like ChatGPT, so the model can produce problematic outputs (especially when prompted to do so).

|

| 114 |

+

The size and composition of the corpus used to train the base model (`mistralai/Mistral-7B-v0.1`) are also unknown; however, it is likely to have included a mix of Web data and technical sources like books and code. See the [Falcon 180B model card](https://huggingface.co/tiiuae/falcon-180B#training-data) for an example of this.

|

| 115 |

+

|

| 116 |

+

|

| 117 |

+

## Training and evaluation data

|

| 118 |

+

|

| 119 |

+

During DPO training, this model achieves the following results on the evaluation set:

|

| 120 |

+

|

| 121 |

+

- Loss: 0.7496

|

| 122 |

+

- Rewards/chosen: -4.5221

|

| 123 |

+

- Rewards/rejected: -8.3184

|

| 124 |

+

- Rewards/accuracies: 0.7812

|

| 125 |

+

- Rewards/margins: 3.7963

|

| 126 |

+

- Logps/rejected: -340.1541

|

| 127 |

+

- Logps/chosen: -299.4561

|

| 128 |

+

- Logits/rejected: -2.3081

|

| 129 |

+

- Logits/chosen: -2.3531
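
The reward figures in this list are related: as far as we understand the DPO logging, `Rewards/margins` is the average gap between the chosen and rejected rewards, so it should equal `Rewards/chosen` minus `Rewards/rejected`. The short check below, using only the numbers reported above, is a sanity check on the list rather than a description of how the trainer computes them.

```python
# Final evaluation metrics, copied from the list above.
rewards_chosen = -4.5221
rewards_rejected = -8.3184
reported_margin = 3.7963

# The margin is the gap between the chosen and rejected rewards.
margin = rewards_chosen - rewards_rejected
print(f"margin = {margin:.4f}")

# The recomputed value agrees with the reported one to four decimals.
assert abs(margin - reported_margin) < 1e-4
```

Both rewards are negative while the margin is positive, which means the model lowered its relative likelihood of the rejected answers more than that of the chosen ones.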

|

| 130 |

+

|

| 131 |

+

|

| 132 |

+

### Training hyperparameters

|

| 133 |

+

|

| 134 |

+

The following hyperparameters were used during training:

|

| 135 |

+

- learning_rate: 5e-07

|

| 136 |

+

- train_batch_size: 2

|

| 137 |

+

- eval_batch_size: 4

|

| 138 |

+

- seed: 42

|

| 139 |

+

- distributed_type: multi-GPU

|

| 140 |

+

- num_devices: 16

|

| 141 |

+

- total_train_batch_size: 32

|

| 142 |

+

- total_eval_batch_size: 64

|

| 143 |

+

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

|

| 144 |

+

- lr_scheduler_type: linear

|

| 145 |

+

- lr_scheduler_warmup_ratio: 0.1

|

| 146 |

+

- num_epochs: 3.0
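
The total batch sizes in this list follow from the per-device sizes and the device count. The sketch below reproduces that arithmetic; the gradient accumulation factor of 1 is an assumption, since it is not listed among the hyperparameters, although it is the value consistent with the reported totals.

```python
# Values copied from the hyperparameter list above.
train_batch_size = 2  # per device
eval_batch_size = 4   # per device
num_devices = 16

# Assumption: no gradient accumulation (not listed in the hyperparameters).
gradient_accumulation_steps = 1

total_train_batch_size = train_batch_size * num_devices * gradient_accumulation_steps
total_eval_batch_size = eval_batch_size * num_devices

print(total_train_batch_size, total_eval_batch_size)
```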

|

| 147 |

+

|

| 148 |

+

### Training results

|

| 149 |

+

|

| 150 |

+

The table below shows the full set of DPO training metrics:

|

| 151 |

+

|

| 152 |

+

|

| 153 |

+

| Training Loss | Epoch | Step | Validation Loss | Rewards/chosen | Rewards/rejected | Rewards/accuracies | Rewards/margins | Logps/rejected | Logps/chosen | Logits/rejected | Logits/chosen |

|

| 154 |

+

|:-------------:|:-----:|:----:|:---------------:|:--------------:|:----------------:|:------------------:|:---------------:|:--------------:|:------------:|:---------------:|:-------------:|

|

| 155 |

+

| 0.6284 | 0.05 | 100 | 0.6098 | 0.0425 | -0.1872 | 0.7344 | 0.2297 | -258.8416 | -253.8099 | -2.7976 | -2.8234 |

|

| 156 |

+

| 0.4908 | 0.1 | 200 | 0.5426 | -0.0279 | -0.6842 | 0.75 | 0.6563 | -263.8124 | -254.5145 | -2.7719 | -2.7960 |

|

| 157 |

+

| 0.5264 | 0.15 | 300 | 0.5324 | 0.0414 | -0.9793 | 0.7656 | 1.0207 | -266.7627 | -253.8209 | -2.7892 | -2.8122 |

|

| 158 |

+

| 0.5536 | 0.21 | 400 | 0.4957 | -0.0185 | -1.5276 | 0.7969 | 1.5091 | -272.2460 | -254.4203 | -2.8542 | -2.8764 |

|

| 159 |

+

| 0.5362 | 0.26 | 500 | 0.5031 | -0.2630 | -1.5917 | 0.7812 | 1.3287 | -272.8869 | -256.8653 | -2.8702 | -2.8958 |

|

| 160 |

+

| 0.5966 | 0.31 | 600 | 0.5963 | -0.2993 | -1.6491 | 0.7812 | 1.3499 | -273.4614 | -257.2279 | -2.8778 | -2.8986 |

|

| 161 |

+

| 0.5014 | 0.36 | 700 | 0.5382 | -0.2859 | -1.4750 | 0.75 | 1.1891 | -271.7204 | -257.0942 | -2.7659 | -2.7869 |

|

| 162 |

+

| 0.5334 | 0.41 | 800 | 0.5677 | -0.4289 | -1.8968 | 0.7969 | 1.4679 | -275.9378 | -258.5242 | -2.7053 | -2.7265 |

|

| 163 |

+

| 0.5251 | 0.46 | 900 | 0.5772 | -0.2116 | -1.3107 | 0.7344 | 1.0991 | -270.0768 | -256.3507 | -2.8463 | -2.8662 |

|

| 164 |

+

| 0.5205 | 0.52 | 1000 | 0.5262 | -0.3792 | -1.8585 | 0.7188 | 1.4793 | -275.5552 | -258.0276 | -2.7893 | -2.7979 |

|

| 165 |

+

| 0.5094 | 0.57 | 1100 | 0.5433 | -0.6279 | -1.9368 | 0.7969 | 1.3089 | -276.3377 | -260.5136 | -2.7453 | -2.7536 |

|

| 166 |

+

| 0.5837 | 0.62 | 1200 | 0.5349 | -0.3780 | -1.9584 | 0.7656 | 1.5804 | -276.5542 | -258.0154 | -2.7643 | -2.7756 |

|

| 167 |

+

| 0.5214 | 0.67 | 1300 | 0.5732 | -1.0055 | -2.2306 | 0.7656 | 1.2251 | -279.2761 | -264.2903 | -2.6986 | -2.7113 |

|

| 168 |

+

| 0.6914 | 0.72 | 1400 | 0.5137 | -0.6912 | -2.1775 | 0.7969 | 1.4863 | -278.7448 | -261.1467 | -2.7166 | -2.7275 |

|

| 169 |

+

| 0.4655 | 0.77 | 1500 | 0.5090 | -0.7987 | -2.2930 | 0.7031 | 1.4943 | -279.8999 | -262.2220 | -2.6651 | -2.6838 |

|

| 170 |

+

| 0.5731 | 0.83 | 1600 | 0.5312 | -0.8253 | -2.3520 | 0.7812 | 1.5268 | -280.4902 | -262.4876 | -2.6543 | -2.6728 |

|

| 171 |

+

| 0.5233 | 0.88 | 1700 | 0.5206 | -0.4573 | -2.0951 | 0.7812 | 1.6377 | -277.9205 | -258.8084 | -2.6870 | -2.7097 |

|

| 172 |

+

| 0.5593 | 0.93 | 1800 | 0.5231 | -0.5508 | -2.2000 | 0.7969 | 1.6492 | -278.9703 | -259.7433 | -2.6221 | -2.6519 |

|

| 173 |

+

| 0.4967 | 0.98 | 1900 | 0.5290 | -0.5340 | -1.9570 | 0.8281 | 1.4230 | -276.5395 | -259.5749 | -2.6564 | -2.6878 |

|

| 174 |

+

| 0.0921 | 1.03 | 2000 | 0.5368 | -1.1376 | -3.1615 | 0.7812 | 2.0239 | -288.5854 | -265.6111 | -2.6040 | -2.6345 |

|

| 175 |

+

| 0.0733 | 1.08 | 2100 | 0.5453 | -1.1045 | -3.4451 | 0.7656 | 2.3406 | -291.4208 | -265.2799 | -2.6289 | -2.6595 |

|

| 176 |

+

| 0.0972 | 1.14 | 2200 | 0.5571 | -1.6915 | -3.9823 | 0.8125 | 2.2908 | -296.7934 | -271.1505 | -2.6471 | -2.6709 |

|

| 177 |

+

| 0.1058 | 1.19 | 2300 | 0.5789 | -1.0621 | -3.8941 | 0.7969 | 2.8319 | -295.9106 | -264.8563 | -2.5527 | -2.5798 |

|

| 178 |

+

| 0.2423 | 1.24 | 2400 | 0.5455 | -1.1963 | -3.5590 | 0.7812 | 2.3627 | -292.5599 | -266.1981 | -2.5414 | -2.5784 |

|

| 179 |

+

| 0.1177 | 1.29 | 2500 | 0.5889 | -1.8141 | -4.3942 | 0.7969 | 2.5801 | -300.9120 | -272.3761 | -2.4802 | -2.5189 |

|

| 180 |

+

| 0.1213 | 1.34 | 2600 | 0.5683 | -1.4608 | -3.8420 | 0.8125 | 2.3812 | -295.3901 | -268.8436 | -2.4774 | -2.5207 |

|

| 181 |

+

| 0.0889 | 1.39 | 2700 | 0.5890 | -1.6007 | -3.7337 | 0.7812 | 2.1330 | -294.3068 | -270.2423 | -2.4123 | -2.4522 |

|

| 182 |

+

| 0.0995 | 1.45 | 2800 | 0.6073 | -1.5519 | -3.8362 | 0.8281 | 2.2843 | -295.3315 | -269.7538 | -2.4685 | -2.5050 |

|

| 183 |

+

| 0.1145 | 1.5 | 2900 | 0.5790 | -1.7939 | -4.2876 | 0.8438 | 2.4937 | -299.8461 | -272.1744 | -2.4272 | -2.4674 |

|

| 184 |

+

| 0.0644 | 1.55 | 3000 | 0.5735 | -1.7285 | -4.2051 | 0.8125 | 2.4766 | -299.0209 | -271.5201 | -2.4193 | -2.4574 |

|

| 185 |

+

| 0.0798 | 1.6 | 3100 | 0.5537 | -1.7226 | -4.2850 | 0.8438 | 2.5624 | -299.8200 | -271.4610 | -2.5367 | -2.5696 |

|

| 186 |

+

| 0.1013 | 1.65 | 3200 | 0.5575 | -1.5715 | -3.9813 | 0.875 | 2.4098 | -296.7825 | -269.9498 | -2.4926 | -2.5267 |

|

| 187 |

+

| 0.1254 | 1.7 | 3300 | 0.5905 | -1.6412 | -4.4703 | 0.8594 | 2.8291 | -301.6730 | -270.6473 | -2.5017 | -2.5340 |

|

| 188 |

+

| 0.085 | 1.76 | 3400 | 0.6133 | -1.9159 | -4.6760 | 0.8438 | 2.7601 | -303.7296 | -273.3941 | -2.4614 | -2.4960 |

|

| 189 |

+

| 0.065 | 1.81 | 3500 | 0.6074 | -1.8237 | -4.3525 | 0.8594 | 2.5288 | -300.4951 | -272.4724 | -2.4597 | -2.5004 |

|

| 190 |

+

| 0.0755 | 1.86 | 3600 | 0.5836 | -1.9252 | -4.4005 | 0.8125 | 2.4753 | -300.9748 | -273.4872 | -2.4327 | -2.4716 |

|

| 191 |

+

| 0.0746 | 1.91 | 3700 | 0.5789 | -1.9280 | -4.4906 | 0.8125 | 2.5626 | -301.8762 | -273.5149 | -2.4686 | -2.5115 |

|

| 192 |

+

| 0.1348 | 1.96 | 3800 | 0.6015 | -1.8658 | -4.2428 | 0.8281 | 2.3769 | -299.3976 | -272.8936 | -2.4943 | -2.5393 |

|

| 193 |

+

| 0.0217 | 2.01 | 3900 | 0.6122 | -2.3335 | -4.9229 | 0.8281 | 2.5894 | -306.1988 | -277.5699 | -2.4841 | -2.5272 |

|

| 194 |

+

| 0.0219 | 2.07 | 4000 | 0.6522 | -2.9890 | -6.0164 | 0.8281 | 3.0274 | -317.1334 | -284.1248 | -2.4105 | -2.4545 |

|

| 195 |

+

| 0.0119 | 2.12 | 4100 | 0.6922 | -3.4777 | -6.6749 | 0.7969 | 3.1972 | -323.7187 | -289.0121 | -2.4272 | -2.4699 |

|

| 196 |

+

| 0.0153 | 2.17 | 4200 | 0.6993 | -3.2406 | -6.6775 | 0.7969 | 3.4369 | -323.7453 | -286.6413 | -2.4047 | -2.4465 |

|

| 197 |

+

| 0.011 | 2.22 | 4300 | 0.7178 | -3.7991 | -7.4397 | 0.7656 | 3.6406 | -331.3667 | -292.2260 | -2.3843 | -2.4290 |

|

| 198 |

+

| 0.0072 | 2.27 | 4400 | 0.6840 | -3.3269 | -6.8021 | 0.8125 | 3.4752 | -324.9908 | -287.5042 | -2.4095 | -2.4536 |

|

| 199 |

+

| 0.0197 | 2.32 | 4500 | 0.7013 | -3.6890 | -7.3014 | 0.8125 | 3.6124 | -329.9841 | -291.1250 | -2.4118 | -2.4543 |

|

| 200 |

+

| 0.0182 | 2.37 | 4600 | 0.7476 | -3.8994 | -7.5366 | 0.8281 | 3.6372 | -332.3356 | -293.2291 | -2.4163 | -2.4565 |

|

| 201 |

+

| 0.0125 | 2.43 | 4700 | 0.7199 | -4.0560 | -7.5765 | 0.8438 | 3.5204 | -332.7345 | -294.7952 | -2.3699 | -2.4100 |

|

| 202 |

+

| 0.0082 | 2.48 | 4800 | 0.7048 | -3.6613 | -7.1356 | 0.875 | 3.4743 | -328.3255 | -290.8477 | -2.3925 | -2.4303 |

|

| 203 |

+

| 0.0118 | 2.53 | 4900 | 0.6976 | -3.7908 | -7.3152 | 0.8125 | 3.5244 | -330.1224 | -292.1431 | -2.3633 | -2.4047 |

|

| 204 |

+

| 0.0118 | 2.58 | 5000 | 0.7198 | -3.9049 | -7.5557 | 0.8281 | 3.6508 | -332.5271 | -293.2844 | -2.3764 | -2.4194 |

|

| 205 |

+

| 0.006 | 2.63 | 5100 | 0.7506 | -4.2118 | -7.9149 | 0.8125 | 3.7032 | -336.1194 | -296.3530 | -2.3407 | -2.3860 |

|

| 206 |

+

| 0.0143 | 2.68 | 5200 | 0.7408 | -4.2433 | -7.9802 | 0.8125 | 3.7369 | -336.7721 | -296.6682 | -2.3509 | -2.3946 |

|

| 207 |

+

| 0.0057 | 2.74 | 5300 | 0.7552 | -4.3392 | -8.0831 | 0.7969 | 3.7439 | -337.8013 | -297.6275 | -2.3388 | -2.3842 |

|

| 208 |

+

| 0.0138 | 2.79 | 5400 | 0.7404 | -4.2395 | -7.9762 | 0.8125 | 3.7367 | -336.7322 | -296.6304 | -2.3286 | -2.3737 |

|

| 209 |

+

| 0.0079 | 2.84 | 5500 | 0.7525 | -4.4466 | -8.2196 | 0.7812 | 3.7731 | -339.1662 | -298.7007 | -2.3200 | -2.3641 |

|

| 210 |

+

| 0.0077 | 2.89 | 5600 | 0.7520 | -4.5586 | -8.3485 | 0.7969 | 3.7899 | -340.4545 | -299.8206 | -2.3078 | -2.3517 |

|

| 211 |

+

| 0.0094 | 2.94 | 5700 | 0.7527 | -4.5542 | -8.3509 | 0.7812 | 3.7967 | -340.4790 | -299.7773 | -2.3062 | -2.3510 |

|

| 212 |

+

| 0.0054 | 2.99 | 5800 | 0.7520 | -4.5169 | -8.3079 | 0.7812 | 3.7911 | -340.0493 | -299.4038 | -2.3081 | -2.3530 |

|

| 213 |

+

|

| 214 |

+

|

| 215 |

+

### Framework versions

|

| 216 |

+

|

| 217 |

+

- Transformers 4.35.0.dev0

|

| 218 |

+

- Pytorch 2.0.1+cu118

|

| 219 |

+

- Datasets 2.12.0

|

| 220 |

+

- Tokenizers 0.14.0

|

| 221 |

+

|

| 222 |

+

## Citation

|

| 223 |

+

|

| 224 |

+

If you find Zephyr-7B-β useful in your work, please cite it with:

|

| 225 |

+

|

| 226 |

+

```

|

| 227 |

+

@misc{tunstall2023zephyr,

|

| 228 |

+

title={Zephyr: Direct Distillation of LM Alignment},

|

| 229 |

+

author={Lewis Tunstall and Edward Beeching and Nathan Lambert and Nazneen Rajani and Kashif Rasul and Younes Belkada and Shengyi Huang and Leandro von Werra and Clémentine Fourrier and Nathan Habib and Nathan Sarrazin and Omar Sanseviero and Alexander M. Rush and Thomas Wolf},

|

| 230 |

+

year={2023},

|

| 231 |

+

eprint={2310.16944},

|

| 232 |

+

archivePrefix={arXiv},

|

| 233 |

+

primaryClass={cs.LG}

|

| 234 |

+

}

|

| 235 |

+

```

|

added_tokens.json

ADDED

|

@@ -0,0 +1,5 @@

| 1 |

+

{

|

| 2 |

+

"</s>": 2,

|

| 3 |

+

"<s>": 1,

|

| 4 |

+

"<unk>": 0

|

| 5 |

+

}

|

all_results.json

ADDED

|

@@ -0,0 +1,21 @@

| 1 |

+

{

|

| 2 |

+

"epoch": 3.0,

|

| 3 |

+

"eval_logits/chosen": -2.353081703186035,

|

| 4 |

+

"eval_logits/rejected": -2.308103084564209,

|

| 5 |

+

"eval_logps/chosen": -299.4560546875,

|

| 6 |

+

"eval_logps/rejected": -340.154052734375,

|

| 7 |

+

"eval_loss": 0.7496059536933899,

|

| 8 |

+

"eval_rewards/accuracies": 0.78125,

|

| 9 |

+

"eval_rewards/chosen": -4.522095203399658,

|

| 10 |

+

"eval_rewards/margins": 3.7963125705718994,

|

| 11 |

+

"eval_rewards/rejected": -8.318408012390137,

|

| 12 |

+

"eval_runtime": 48.0152,

|

| 13 |

+

"eval_samples": 1000,

|

| 14 |

+

"eval_samples_per_second": 20.827,

|

| 15 |

+

"eval_steps_per_second": 0.333,

|

| 16 |

+

"train_loss": 0.2172969928600547,

|

| 17 |

+

"train_runtime": 23865.9828,

|

| 18 |

+

"train_samples": 61966,

|

| 19 |

+

"train_samples_per_second": 7.789,

|

| 20 |

+

"train_steps_per_second": 0.243

|

| 21 |

+

}
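
The throughput fields in this file can be cross-checked against the sample counts and runtimes it reports. The sketch below does so with the values above plus the total training batch size of 32 from the README hyperparameters. Rounding the per-epoch step count up (keeping the last partial batch) is an assumption about how the trainer counts steps.

```python
import math

# Values copied from all_results.json above.
results = {
    "epoch": 3.0,
    "train_runtime": 23865.9828,
    "train_samples": 61966,
    "eval_runtime": 48.0152,
    "eval_samples": 1000,
}
TOTAL_TRAIN_BATCH_SIZE = 32  # from the README hyperparameters

# Samples processed per second over the whole run (all epochs).
train_sps = results["train_samples"] * results["epoch"] / results["train_runtime"]
eval_sps = results["eval_samples"] / results["eval_runtime"]

# Assumption: the last partial batch of each epoch is kept, hence ceil().
steps_per_epoch = math.ceil(results["train_samples"] / TOTAL_TRAIN_BATCH_SIZE)
total_steps = int(steps_per_epoch * results["epoch"])
steps_per_second = total_steps / results["train_runtime"]

print(round(train_sps, 3), round(eval_sps, 3), total_steps, round(steps_per_second, 3))
```

Under these assumptions the recomputed rates round to the reported `train_samples_per_second`, `eval_samples_per_second`, and `train_steps_per_second`, and the step count is in line with the last logged step (5800 at epoch 2.99) in the README training table.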

|

cal_data.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:08be1103ff8fcef33b570f3c0f5ae4cc7f9dc5c3f264105baa55fc9b132ed1be

|

| 3 |

+

size 1638488
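
The three lines above are a Git LFS pointer: the repository stores this small text stub, while the real `cal_data.safetensors` payload lives in LFS storage. As a minimal sketch, the pointer can be parsed into its fields as shown below. The function name `parse_lfs_pointer` and the returned dictionary keys are illustrative choices, not part of any library.

```python
def parse_lfs_pointer(text):
    """Parse the 'key value' lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form '<hash algorithm>:<hex digest>'.
    hash_algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algo": hash_algo,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer contents copied from the diff above.
pointer_text = """\
version https://git-lfs.github.com/spec/v1
oid sha256:08be1103ff8fcef33b570f3c0f5ae4cc7f9dc5c3f264105baa55fc9b132ed1be
size 1638488
"""

pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algo"], pointer["size_bytes"])
```

The `size` field is the byte size of the real file, so this pointer describes a payload of about 1.6 MB, and the digest can be compared against a SHA-256 hash of the downloaded file to verify it.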

|

config.json

ADDED

|

@@ -0,0 +1,26 @@

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "HuggingFaceH4/zephyr-7b-beta",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"MistralForCausalLM"

|

| 5 |

+

],

|

| 6 |

+

"bos_token_id": 1,

|

| 7 |

+

"eos_token_id": 2,

|

| 8 |

+

"hidden_act": "silu",

|

| 9 |

+

"hidden_size": 4096,

|

| 10 |

+

"initializer_range": 0.02,

|

| 11 |

+

"intermediate_size": 14336,

|

| 12 |

+

"max_position_embeddings": 32768,

|

| 13 |

+

"model_type": "mistral",

|

| 14 |

+

"num_attention_heads": 32,

|

| 15 |

+

"num_hidden_layers": 32,

|

| 16 |

+

"num_key_value_heads": 8,

|

| 17 |

+

"pad_token_id": 2,

|

| 18 |

+

"rms_norm_eps": 1e-05,

|

| 19 |

+

"rope_theta": 10000.0,

|

| 20 |

+

"sliding_window": 4096,

|

| 21 |

+

"tie_word_embeddings": false,

|

| 22 |

+

"torch_dtype": "bfloat16",

|

| 23 |

+

"transformers_version": "4.35.0.dev0",

|

| 24 |

+

"use_cache": true,

|

| 25 |

+

"vocab_size": 32000

|

| 26 |

+

}
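
Several of these fields together describe the attention layout: `num_key_value_heads` (8) is smaller than `num_attention_heads` (32), so groups of query heads share one key/value head. The sketch below derives a few quantities from the config values above. It assumes the per-head dimension is `hidden_size / num_attention_heads` and that cached keys and values are stored in `bfloat16` (2 bytes per element); the window-sized figure is only an upper bound that applies if an inference stack actually limits the cache to `sliding_window` tokens.

```python
# Values copied from config.json above.
config = {
    "hidden_size": 4096,
    "num_attention_heads": 32,
    "num_key_value_heads": 8,
    "num_hidden_layers": 32,
    "sliding_window": 4096,
}
BYTES_PER_ELEMENT = 2  # assumption: cache kept in bfloat16, as in torch_dtype

# Assumption: head dimension is hidden_size divided by the number of query heads.
head_dim = config["hidden_size"] // config["num_attention_heads"]
queries_per_kv_head = config["num_attention_heads"] // config["num_key_value_heads"]

# One key and one value vector per KV head, per layer, per token.
kv_bytes_per_token = (
    2 * config["num_hidden_layers"] * config["num_key_value_heads"] * head_dim * BYTES_PER_ELEMENT
)
# Cache size if it holds exactly one sliding window of tokens.
window_cache_mib = kv_bytes_per_token * config["sliding_window"] / 2**20

print(head_dim, queries_per_kv_head, kv_bytes_per_token, window_cache_mib)
```

With 8 key/value heads instead of 32, the per-token cache is a quarter of what full multi-head attention would need at the same head dimension.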

|

eval_results.json

ADDED

|

@@ -0,0 +1,16 @@

| 1 |

+

{

|

| 2 |

+

"epoch": 3.0,

|

| 3 |

+

"eval_logits/chosen": -2.353081703186035,

|

| 4 |

+

"eval_logits/rejected": -2.308103084564209,

|

| 5 |

+

"eval_logps/chosen": -299.4560546875,

|

| 6 |

+

"eval_logps/rejected": -340.154052734375,

|

| 7 |

+

"eval_loss": 0.7496059536933899,

|

| 8 |

+

"eval_rewards/accuracies": 0.78125,

|

| 9 |

+

"eval_rewards/chosen": -4.522095203399658,

|

| 10 |

+

"eval_rewards/margins": 3.7963125705718994,

|

| 11 |

+

"eval_rewards/rejected": -8.318408012390137,

|

| 12 |

+

"eval_runtime": 48.0152,

|

| 13 |

+

"eval_samples": 1000,

|

| 14 |

+

"eval_samples_per_second": 20.827,

|

| 15 |

+

"eval_steps_per_second": 0.333

|

| 16 |

+

}

|

generation_config.json

ADDED

|

@@ -0,0 +1,6 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 1,

|

| 4 |

+

"eos_token_id": 2,

|

| 5 |

+

"transformers_version": "4.35.0.dev0"

|

| 6 |

+

}

|

input_states.safetensors

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:812e15620fe06f0af607595658098ca8344ac8d3c9351363374527312da5135d

|

| 3 |

+

size 1677721696

|

job.json

ADDED

|

The diff for this file is too large to render.

measurement.json

ADDED

|

The diff for this file is too large to render.

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,298 @@

| 1 |

+

{

|

| 2 |

+

"metadata": {

|

| 3 |

+

"total_size": 14483464192

|

| 4 |

+

},

|

| 5 |

+

"weight_map": {

|

| 6 |

+

"lm_head.weight": "model-00008-of-00008.safetensors",

|

| 7 |

+

"model.embed_tokens.weight": "model-00001-of-00008.safetensors",

|

| 8 |

+

"model.layers.0.input_layernorm.weight": "model-00001-of-00008.safetensors",

|

| 9 |

+

"model.layers.0.mlp.down_proj.weight": "model-00001-of-00008.safetensors",

|

| 10 |

+

"model.layers.0.mlp.gate_proj.weight": "model-00001-of-00008.safetensors",

|

| 11 |

+

"model.layers.0.mlp.up_proj.weight": "model-00001-of-00008.safetensors",

|

| 12 |

+

"model.layers.0.post_attention_layernorm.weight": "model-00001-of-00008.safetensors",

|

| 13 |

+

"model.layers.0.self_attn.k_proj.weight": "model-00001-of-00008.safetensors",

|

| 14 |

+

"model.layers.0.self_attn.o_proj.weight": "model-00001-of-00008.safetensors",

|

| 15 |

+

"model.layers.0.self_attn.q_proj.weight": "model-00001-of-00008.safetensors",

|

| 16 |

+

"model.layers.0.self_attn.v_proj.weight": "model-00001-of-00008.safetensors",

|

| 17 |

+

"model.layers.1.input_layernorm.weight": "model-00001-of-00008.safetensors",

|

| 18 |

+

"model.layers.1.mlp.down_proj.weight": "model-00001-of-00008.safetensors",

|

| 19 |

+

"model.layers.1.mlp.gate_proj.weight": "model-00001-of-00008.safetensors",

|

| 20 |

+

"model.layers.1.mlp.up_proj.weight": "model-00001-of-00008.safetensors",

|

| 21 |

+

"model.layers.1.post_attention_layernorm.weight": "model-00001-of-00008.safetensors",

|

| 22 |

+

"model.layers.1.self_attn.k_proj.weight": "model-00001-of-00008.safetensors",

|

| 23 |

+

"model.layers.1.self_attn.o_proj.weight": "model-00001-of-00008.safetensors",

|

| 24 |

+

"model.layers.1.self_attn.q_proj.weight": "model-00001-of-00008.safetensors",

|

| 25 |

+

"model.layers.1.self_attn.v_proj.weight": "model-00001-of-00008.safetensors",

|

| 26 |

+

"model.layers.10.input_layernorm.weight": "model-00003-of-00008.safetensors",

|

| 27 |

+

"model.layers.10.mlp.down_proj.weight": "model-00003-of-00008.safetensors",

|

| 28 |

+

"model.layers.10.mlp.gate_proj.weight": "model-00003-of-00008.safetensors",

|

| 29 |

+

"model.layers.10.mlp.up_proj.weight": "model-00003-of-00008.safetensors",

|

| 30 |

+

"model.layers.10.post_attention_layernorm.weight": "model-00003-of-00008.safetensors",

|

| 31 |

+

"model.layers.10.self_attn.k_proj.weight": "model-00003-of-00008.safetensors",

|

| 32 |

+

"model.layers.10.self_attn.o_proj.weight": "model-00003-of-00008.safetensors",

|

| 33 |

+

"model.layers.10.self_attn.q_proj.weight": "model-00003-of-00008.safetensors",

|

| 34 |

+

"model.layers.10.self_attn.v_proj.weight": "model-00003-of-00008.safetensors",

"model.layers.11.input_layernorm.weight": "model-00003-of-00008.safetensors",
"model.layers.11.mlp.down_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.11.mlp.gate_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.11.mlp.up_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.11.post_attention_layernorm.weight": "model-00003-of-00008.safetensors",
"model.layers.11.self_attn.k_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.11.self_attn.o_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.11.self_attn.q_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.11.self_attn.v_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.12.input_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.12.mlp.down_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.12.mlp.gate_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.12.mlp.up_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.12.post_attention_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.12.self_attn.k_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.12.self_attn.o_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.12.self_attn.q_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.12.self_attn.v_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.13.input_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.13.mlp.down_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.13.mlp.gate_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.13.mlp.up_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.13.post_attention_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.13.self_attn.k_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.13.self_attn.o_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.13.self_attn.q_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.13.self_attn.v_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.input_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.14.mlp.down_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.mlp.gate_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.mlp.up_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.post_attention_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.14.self_attn.k_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.self_attn.o_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.self_attn.q_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.14.self_attn.v_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.input_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.15.mlp.down_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.mlp.gate_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.mlp.up_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.post_attention_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.15.self_attn.k_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.self_attn.o_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.self_attn.q_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.15.self_attn.v_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.input_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.16.mlp.down_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.mlp.gate_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.mlp.up_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.post_attention_layernorm.weight": "model-00004-of-00008.safetensors",
"model.layers.16.self_attn.k_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.self_attn.o_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.self_attn.q_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.16.self_attn.v_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.17.input_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.17.mlp.down_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.17.mlp.gate_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.17.mlp.up_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.17.post_attention_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.17.self_attn.k_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.17.self_attn.o_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.17.self_attn.q_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.17.self_attn.v_proj.weight": "model-00004-of-00008.safetensors",
"model.layers.18.input_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.18.mlp.down_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.18.mlp.gate_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.18.mlp.up_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.18.post_attention_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.18.self_attn.k_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.18.self_attn.o_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.18.self_attn.q_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.18.self_attn.v_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.input_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.19.mlp.down_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.mlp.gate_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.mlp.up_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.post_attention_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.19.self_attn.k_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.self_attn.o_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.self_attn.q_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.19.self_attn.v_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.2.input_layernorm.weight": "model-00001-of-00008.safetensors",
"model.layers.2.mlp.down_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.2.mlp.gate_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.2.mlp.up_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.2.post_attention_layernorm.weight": "model-00001-of-00008.safetensors",
"model.layers.2.self_attn.k_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.2.self_attn.o_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.2.self_attn.q_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.2.self_attn.v_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.20.input_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.20.mlp.down_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.20.mlp.gate_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.20.mlp.up_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.20.post_attention_layernorm.weight": "model-00005-of-00008.safetensors",
"model.layers.20.self_attn.k_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.20.self_attn.o_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.20.self_attn.q_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.20.self_attn.v_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.21.input_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.21.mlp.down_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.21.mlp.gate_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.21.mlp.up_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.21.post_attention_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.21.self_attn.k_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.21.self_attn.o_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.21.self_attn.q_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.21.self_attn.v_proj.weight": "model-00005-of-00008.safetensors",
"model.layers.22.input_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.22.mlp.down_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.22.mlp.gate_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.22.mlp.up_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.22.post_attention_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.22.self_attn.k_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.22.self_attn.o_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.22.self_attn.q_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.22.self_attn.v_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.input_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.23.mlp.down_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.mlp.gate_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.mlp.up_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.post_attention_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.23.self_attn.k_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.self_attn.o_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.self_attn.q_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.23.self_attn.v_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.input_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.24.mlp.down_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.mlp.gate_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.mlp.up_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.post_attention_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.24.self_attn.k_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.self_attn.o_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.self_attn.q_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.24.self_attn.v_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.input_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.25.mlp.down_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.mlp.gate_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.mlp.up_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.post_attention_layernorm.weight": "model-00006-of-00008.safetensors",
"model.layers.25.self_attn.k_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.self_attn.o_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.self_attn.q_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.25.self_attn.v_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.26.input_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.26.mlp.down_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.26.mlp.gate_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.26.mlp.up_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.26.post_attention_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.26.self_attn.k_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.26.self_attn.o_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.26.self_attn.q_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.26.self_attn.v_proj.weight": "model-00006-of-00008.safetensors",
"model.layers.27.input_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.27.mlp.down_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.27.mlp.gate_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.27.mlp.up_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.27.post_attention_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.27.self_attn.k_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.27.self_attn.o_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.27.self_attn.q_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.27.self_attn.v_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.input_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.28.mlp.down_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.mlp.gate_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.mlp.up_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.post_attention_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.28.self_attn.k_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.self_attn.o_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.self_attn.q_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.28.self_attn.v_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.input_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.29.mlp.down_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.mlp.gate_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.mlp.up_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.post_attention_layernorm.weight": "model-00007-of-00008.safetensors",
"model.layers.29.self_attn.k_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.self_attn.o_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.self_attn.q_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.29.self_attn.v_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.3.input_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.3.mlp.down_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.3.mlp.gate_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.3.mlp.up_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.3.post_attention_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.3.self_attn.k_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.3.self_attn.o_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.3.self_attn.q_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.3.self_attn.v_proj.weight": "model-00001-of-00008.safetensors",
"model.layers.30.input_layernorm.weight": "model-00008-of-00008.safetensors",
"model.layers.30.mlp.down_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.30.mlp.gate_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.30.mlp.up_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.30.post_attention_layernorm.weight": "model-00008-of-00008.safetensors",
"model.layers.30.self_attn.k_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.30.self_attn.o_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.30.self_attn.q_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.30.self_attn.v_proj.weight": "model-00007-of-00008.safetensors",
"model.layers.31.input_layernorm.weight": "model-00008-of-00008.safetensors",
"model.layers.31.mlp.down_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.31.mlp.gate_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.31.mlp.up_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.31.post_attention_layernorm.weight": "model-00008-of-00008.safetensors",
"model.layers.31.self_attn.k_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.31.self_attn.o_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.31.self_attn.q_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.31.self_attn.v_proj.weight": "model-00008-of-00008.safetensors",
"model.layers.4.input_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.4.mlp.down_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.4.mlp.gate_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.4.mlp.up_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.4.post_attention_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.4.self_attn.k_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.4.self_attn.o_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.4.self_attn.q_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.4.self_attn.v_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.input_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.5.mlp.down_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.mlp.gate_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.mlp.up_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.post_attention_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.5.self_attn.k_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.self_attn.o_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.self_attn.q_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.5.self_attn.v_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.input_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.6.mlp.down_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.mlp.gate_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.mlp.up_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.post_attention_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.6.self_attn.k_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.self_attn.o_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.self_attn.q_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.6.self_attn.v_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.input_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.7.mlp.down_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.mlp.gate_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.mlp.up_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.post_attention_layernorm.weight": "model-00002-of-00008.safetensors",
"model.layers.7.self_attn.k_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.self_attn.o_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.self_attn.q_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.7.self_attn.v_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.8.input_layernorm.weight": "model-00003-of-00008.safetensors",
"model.layers.8.mlp.down_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.8.mlp.gate_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.8.mlp.up_proj.weight": "model-00003-of-00008.safetensors",
"model.layers.8.post_attention_layernorm.weight": "model-00003-of-00008.safetensors",
"model.layers.8.self_attn.k_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.8.self_attn.o_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.8.self_attn.q_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.8.self_attn.v_proj.weight": "model-00002-of-00008.safetensors",
"model.layers.9.input_layernorm.weight": "model-00003-of-00008.safetensors",
"model.layers.9.mlp.down_proj.weight": "model-00003-of-00008.safetensors",
|

| 289 |

+