Update README.md

---
license: apache-2.0
---

# Positive Transfer of the Whisper Speech Transformer to Human and Animal Voice Activity Detection

We propose **WhisperSeg**, which uses the Whisper Transformer, pre-trained for Automatic Speech Recognition (ASR), for both human and animal Voice Activity Detection (VAD). For more details, please refer to our paper:

> [**Positive Transfer of the Whisper Speech Transformer to Human and Animal Voice Activity Detection**](https://doi.org/10.1101/2023.09.30.560270)
>
> Nianlong Gu, Kanghwi Lee, Maris Basha, Sumit Kumar Ram, Guanghao You, Richard H. R. Hahnloser <br>
> University of Zurich and ETH Zurich

*Accepted to the 2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2024)*

The model "nccratliri/whisperseg-base-animal-vad" is the multi-species WhisperSeg-base checkpoint, fine-tuned on vocal segmentation datasets from five species.

## Usage
### Clone the GitHub repo and install dependencies
```bash
git clone https://github.com/nianlonggu/WhisperSeg.git
cd WhisperSeg; pip install -r requirements.txt
```

Then, from inside the "WhisperSeg" folder, run the following Python script:
```python
from model import WhisperSegmenter
import librosa
import json

# Load the multi-species checkpoint; device="cuda" runs the model on a GPU.
segmenter = WhisperSegmenter( "nccratliri/whisperseg-base-animal-vad", device="cuda" )

# Sampling rate used when loading the audio, and the spectrogram time step passed to the model.
sr = 32000
spec_time_step = 0.0025

audio, _ = librosa.load( "data/example_subset/Zebra_finch/test_adults/zebra_finch_g17y2U-f00007.wav",
                         sr = sr )
## Note: if spec_time_step is not provided, the model uses a default value.
prediction = segmenter.segment( audio, sr = sr, spec_time_step = spec_time_step )
print(prediction)
```
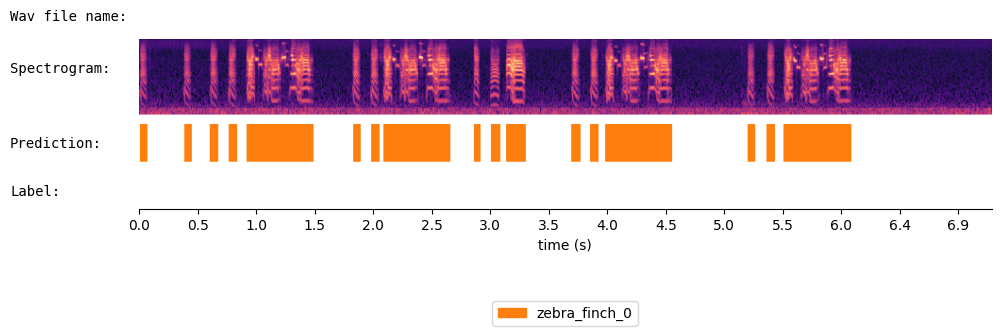

{'onset': [0.01, 0.38, 0.603, 0.758, 0.912, 1.813, 1.967, 2.073, 2.838, 2.982, 3.112, 3.668, 3.828, 3.953, 5.158, 5.323, 5.467], 'offset': [0.073, 0.447, 0.673, 0.83, 1.483, 1.882, 2.037, 2.643, 2.893, 3.063, 3.283, 3.742, 3.898, 4.523, 5.223, 5.393, 6.043], 'cluster': ['zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0', 'zebra_finch_0']}
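
The printed dictionary stores onsets, offsets, and cluster labels as three parallel lists. As a minimal sketch, assuming the values are times in seconds and that the three lists always have equal length, the following turns a shortened copy of the output above into one record per segment and serializes it with the standard `json` module. The helper name `to_segments` is hypothetical and not part of WhisperSeg.

```python
import json

# Shortened copy of the printed output above (first three segments only).
prediction = {
    "onset": [0.01, 0.38, 0.603],
    "offset": [0.073, 0.447, 0.673],
    "cluster": ["zebra_finch_0", "zebra_finch_0", "zebra_finch_0"],
}


def to_segments(prediction):
    """Hypothetical helper: one record per detected segment.

    Assumes onset and offset are times in seconds and that the
    three lists in `prediction` have the same length.
    """
    return [
        {
            "onset": onset,
            "offset": offset,
            "duration": round(offset - onset, 3),
            "cluster": cluster,
        }
        for onset, offset, cluster in zip(
            prediction["onset"], prediction["offset"], prediction["cluster"]
        )
    ]


segments = to_segments(prediction)
for segment in segments:
    print(segment)

# Serialize the records, e.g. to write them to a file later.
serialized = json.dumps(segments, indent=2)
```

A list of records is often easier to filter or sort (for example by duration) than the three parallel lists, and it can be restored with `json.loads`.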

Visualize the results of WhisperSeg:
```python
from audio_utils import SpecViewer

# Reuses audio, sr, and prediction from the script above.
# Lowest frequency (Hz) shown in the spectrogram; 0 is an assumed value, adjust for your data.
min_frequency = 0

spec_viewer = SpecViewer()
spec_viewer.visualize( audio = audio, sr = sr, min_frequency = min_frequency, prediction = prediction,
                       window_size=8, precision_bits=1 )
```

Run it in Google Colab:
<a href="https://colab.research.google.com/github/nianlonggu/WhisperSeg/blob/master/docs/WhisperSeg_Voice_Activity_Detection_Demo.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

For more details, please refer to the GitHub repository: https://github.com/nianlonggu/WhisperSeg

## Citation
When using our code or models for your work, please cite the following paper:
```
@INPROCEEDINGS{10447620,
  author={Gu, Nianlong and Lee, Kanghwi and Basha, Maris and Kumar Ram, Sumit and You, Guanghao and Hahnloser, Richard H. R.},
  booktitle={ICASSP 2024 - 2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP)},
  title={Positive Transfer of the Whisper Speech Transformer to Human and Animal Voice Activity Detection},
  year={2024},
  volume={},
  number={},
  pages={7505-7509},
  keywords={Voice activity detection;Adaptation models;Animals;Transformers;Acoustics;Human voice;Spectrogram;Voice activity detection;audio segmentation;Transformer;Whisper},
  doi={10.1109/ICASSP48485.2024.10447620}}
```

## Contact
nianlong.gu@uzh.ch