init

- .gitignore +141 -0

- app.py +38 -0

- cyclegan.py +116 -0

- img/7134850@N05_identity_2@7720949260_0.jpg +0 -0

- img/7134850@N05_identity_2@7720963358_0.jpg +0 -0

- img/7134850@N05_identity_2@8978938957_3.jpg +0 -0

- img/7134850@N05_identity_2@8980174892_1.jpg +0 -0

- img/7154980@N03_identity_0@2379147786_0.jpg +0 -0

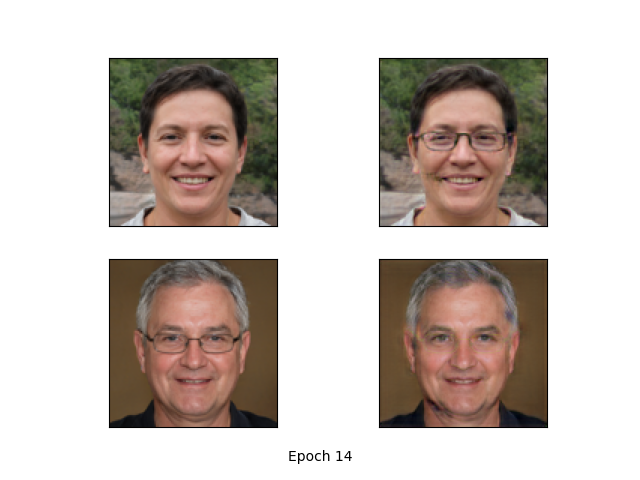

- img/epoch_14_results.png +0 -0

- nets/__init__.py +0 -0

- nets/cyclegan.py +923 -0

- nets/resnest/__init__.py +2 -0

- nets/resnest/ablation.py +106 -0

- nets/resnest/resnest.py +60 -0

- nets/resnest/resnet.py +310 -0

- nets/resnest/splat.py +99 -0

- utils/__init__.py +0 -0

- utils/callbacks.py +65 -0

- utils/dataloader.py +45 -0

- utils/utils.py +136 -0

- utils/utils_fit.py +249 -0

.gitignore

ADDED

@@ -0,0 +1,141 @@
+# ignore map, miou, datasets
+map_out/
+miou_out/
+VOCdevkit/
+datasets/
+Medical_Datasets/
+lfw/
+logs/
+model_data/
+.temp_map_out/
+results/
+
+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+wheels/
+pip-wheel-metadata/
+share/python-wheels/
+*.egg-info/
+.installed.cfg
+*.egg
+MANIFEST
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.nox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*.cover
+*.py,cover
+.hypothesis/
+.pytest_cache/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+db.sqlite3
+db.sqlite3-journal
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+target/
+
+# Jupyter Notebook
+.ipynb_checkpoints
+
+# IPython
+profile_default/
+ipython_config.py
+
+# pyenv
+.python-version
+
+# pipenv
+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.
+# However, in case of collaboration, if having platform-specific dependencies or dependencies
+# having no cross-platform support, pipenv may install dependencies that don't work, or not
+# install all needed dependencies.
+#Pipfile.lock
+
+# PEP 582; used by e.g. github.com/David-OConnor/pyflow
+__pypackages__/
+
+# Celery stuff
+celerybeat-schedule
+celerybeat.pid
+
+# SageMath parsed files
+*.sage.py
+
+# Environments
+.env
+.venv
+env/
+venv/
+ENV/
+env.bak/
+venv.bak/
+
+# Spyder project settings
+.spyderproject
+.spyproject
+
+# Rope project settings
+.ropeproject
+
+# mkdocs documentation
+/site
+
+# mypy
+.mypy_cache/
+.dmypy.json
+dmypy.json
+
+# Pyre type checker
+.pyre/

app.py

ADDED

@@ -0,0 +1,38 @@
+'''
+Author: Egrt
+Date: 2022-01-13 13:34:10
+LastEditors: Egrt
+LastEditTime: 2022-10-17 10:23:29
+FilePath: \MaskGAN\app.py
+'''
+from cyclegan import CYCLEGAN
+import gradio as gr
+import os
+cyclegan = CYCLEGAN()
+
+# -------- Model inference ---------- #
+'''
+description:
+param {*} img: face image with glasses (Image)
+return {*} r_image: face image with the occlusion removed (Image)
+'''
+def inference(img):
+    r_image = cyclegan.detect_image(img)
+    return r_image
+
+# -------- Web page info ---------- #
+title = "Unsupervised Reconstruction of Glasses-Occluded Faces"
+description = "Reconstructs faces occluded by glasses using a generative adversarial network, effectively removing the glasses occlusion. @Intelligent Control and Image Processing Laboratory, Southwest University of Science and Technology"
+article = "<p style='text-align: center'>DeMaskGAN: Face Restoration Using Swin Transformer </p>"
+example_img_dir = 'img'
+example_img_name = os.listdir(example_img_dir)
+examples=[os.path.join(example_img_dir, image_path) for image_path in example_img_name if image_path.endswith(('.jpg','.jpeg'))]
+gr.Interface(
+    inference,
+    gr.inputs.Image(type="pil", label="Input"),
+    gr.outputs.Image(type="pil", label="Output"),
+    title=title,
+    description=description,
+    article=article,
+    examples=examples
+    ).launch()
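The example list in app.py is built by filtering the contents of the `img` directory by file extension. A minimal sketch of that step in isolation follows, so it can be run without Gradio or the model weights. The helper name `collect_examples` is hypothetical and not part of the commit; unlike the committed code, this sketch sorts the names and matches extensions case-insensitively, which is an assumption about what is desirable rather than what app.py does.

```python
import os
import tempfile


def collect_examples(example_dir, extensions=(".jpg", ".jpeg")):
    """Return sorted paths of files in example_dir with a matching extension.

    Hypothetical helper: app.py inlines this as a list comprehension and
    compares extensions case-sensitively without sorting.
    """
    names = sorted(os.listdir(example_dir))
    return [
        os.path.join(example_dir, name)
        for name in names
        if name.lower().endswith(extensions)  # str.endswith accepts a tuple
    ]


if __name__ == "__main__":
    # Build a throwaway directory so the sketch runs anywhere.
    with tempfile.TemporaryDirectory() as tmp:
        for name in ("b.jpg", "a.JPEG", "epoch_14_results.png"):
            open(os.path.join(tmp, name), "wb").close()
        print([os.path.basename(p) for p in collect_examples(tmp)])
```

With this filter, `img/epoch_14_results.png` from the commit would be skipped and only the five `.jpg` files would be offered as examples, which matches the behavior of the committed comprehension.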

cyclegan.py

ADDED

@@ -0,0 +1,116 @@
+import cv2
+import numpy as np
+import torch
+from PIL import Image
+from torch import nn
+
+from nets.cyclegan import Generator
+from utils.utils import (cvtColor, postprocess_output, preprocess_input,
+                         resize_image, show_config)
+
+
+class CYCLEGAN(object):
+    _defaults = {
+        #-----------------------------------------------#
+        #   model_path points to the weights file under the logs folder
+        #-----------------------------------------------#
+        "model_path" : 'model_data/G_model_B2A_last_epoch_weights.pth',
+        #-----------------------------------------------#
+        #   Input image size setting
+        #-----------------------------------------------#
+        "input_shape" : [112, 112],
+        #-------------------------------#
+        #   Whether to use a distortion-free (letterbox) resize
+        #-------------------------------#
+        "letterbox_image" : True,
+        #-------------------------------#
+        #   Whether to use CUDA
+        #   Set to False if no GPU is available
+        #-------------------------------#
+        "cuda" : True,
+    }
+
+    #---------------------------------------------------#
+    #   Initialize CYCLEGAN
+    #---------------------------------------------------#
+    def __init__(self, **kwargs):
+        self.__dict__.update(self._defaults)
+        for name, value in kwargs.items():
+            setattr(self, name, value)
+            self._defaults[name] = value
+        self.generate()
+
+        show_config(**self._defaults)
+
+    def generate(self):
+        #----------------------------------------#
+        #   Create the GAN model
+        #----------------------------------------#
+        self.net = Generator(upscale=1, img_size=tuple(self.input_shape),
+                             window_size=7, img_range=1., depths=[3, 3, 3, 3],
+                             embed_dim=60, num_heads=[3, 3, 3, 3], mlp_ratio=1, upsampler='1conv').eval()
+
+        device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')
+        self.net.load_state_dict(torch.load(self.model_path, map_location=device))
+        self.net = self.net.eval()
+        print('{} model loaded.'.format(self.model_path))
+
+        if self.cuda:
+            self.net = nn.DataParallel(self.net)
+            self.net = self.net.cuda()
+
+    #---------------------------------------------------#
+    #   Generate a 1x1 image
+    #---------------------------------------------------#
+    def detect_image(self, image):
+        #---------------------------------------------------------#
+        #   Convert the image to RGB here to prevent grayscale images from raising errors during prediction.
+        #   The code only supports prediction on RGB images; all other image types are converted to RGB.
+        #---------------------------------------------------------#
+        image = cvtColor(image)
+        #---------------------------------------------------#
+        #   Get the height and width
+        #---------------------------------------------------#
+        orininal_h = np.array(image).shape[0]
+        orininal_w = np.array(image).shape[1]
+        #---------------------------------------------------------#
+        #   Add gray bars to the image for a distortion-free resize
+        #   A direct resize can also be used for recognition
+        #---------------------------------------------------------#
+        image_data, nw, nh = resize_image(image, (self.input_shape[1],self.input_shape[0]), self.letterbox_image)
+        #---------------------------------------------------------#
+        #   Add the batch_size dimension
+        #---------------------------------------------------------#
+        image_data = np.expand_dims(np.transpose(preprocess_input(np.array(image_data, dtype='float32')), (2, 0, 1)), 0)
+
+        with torch.no_grad():
+            images = torch.from_numpy(image_data)
+            if self.cuda:
+                images = images.cuda()
+
+            #---------------------------------------------------#
+            #   Pass the image through the network for prediction
+            #---------------------------------------------------#
+            pr = self.net(images)[0]
+            #---------------------------------------------------#
+            #   Convert to numpy
+            #---------------------------------------------------#
+            pr = pr.permute(1, 2, 0).cpu().numpy()
+
+            #--------------------------------------#
+            #   Crop off the gray-bar region
+            #--------------------------------------#
+            if nw is not None:
+                pr = pr[int((self.input_shape[0] - nh) // 2) : int((self.input_shape[0] - nh) // 2 + nh), \
+                        int((self.input_shape[1] - nw) // 2) : int((self.input_shape[1] - nw) // 2 + nw)]
+
+            #---------------------------------------------------#
+            #   Resize the image
+            #---------------------------------------------------#
+            pr = cv2.resize(pr, (orininal_w, orininal_h), interpolation = cv2.INTER_LINEAR)
+
+        image = postprocess_output(pr)
+        image = np.clip(image, 0, 255)
+        image = Image.fromarray(np.uint8(image))
+
+        return image
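`detect_image` pads the input with gray bars before inference and then slices the network output back to the unpadded region using `nw` and `nh`. The sketch below reproduces only that arithmetic with NumPy. The function names `letterbox_size` and `crop_letterbox` are hypothetical, and the scaling rule (scale by the smaller of the two ratios, then center the result) is an assumption about what `resize_image` in utils/utils.py does, since that file is not shown here.

```python
import numpy as np


def letterbox_size(orig_w, orig_h, target_w, target_h):
    """Size of the image after an aspect-preserving fit into the target.

    Assumed rule: scale by the smaller ratio so the whole image fits.
    """
    scale = min(target_w / orig_w, target_h / orig_h)
    return int(orig_w * scale), int(orig_h * scale)


def crop_letterbox(pr, nw, nh):
    """Remove centered padding from an (H, W, C) array.

    Mirrors the slice in detect_image: the content is assumed to sit in
    the middle of the padded canvas, with equal bars on both sides.
    """
    h, w = pr.shape[:2]
    top = (h - nh) // 2
    left = (w - nw) // 2
    return pr[top:top + nh, left:left + nw]


if __name__ == "__main__":
    # A 224x112 (w x h) image fitted into the 112x112 input used by the model.
    nw, nh = letterbox_size(224, 112, 112, 112)
    canvas = np.zeros((112, 112, 3), dtype=np.float32)
    print(nw, nh, crop_letterbox(canvas, nw, nh).shape)
```

After this crop, the committed code resizes the result back to the original width and height with `cv2.resize`, so the output image has the same dimensions as the input.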

img/7134850@N05_identity_2@7720949260_0.jpg

ADDED

img/7134850@N05_identity_2@7720963358_0.jpg

ADDED

img/7134850@N05_identity_2@8978938957_3.jpg

ADDED

img/7134850@N05_identity_2@8980174892_1.jpg

ADDED

img/7154980@N03_identity_0@2379147786_0.jpg

ADDED

img/epoch_14_results.png

ADDED

nets/__init__.py

ADDED

File without changes

nets/cyclegan.py

ADDED

@@ -0,0 +1,923 @@
+# -----------------------------------------------------------------------------------
+# SwinIR: Image Restoration Using Swin Transformer, https://arxiv.org/abs/2108.10257
+# Originally Written by Ze Liu, Modified by Jingyun Liang.
+# -----------------------------------------------------------------------------------
+
+import math
+
+import torch
+import torch.nn as nn
+import torch.nn.functional as F
+import torch.utils.checkpoint as checkpoint
+from timm.models.layers import DropPath, to_2tuple, trunc_normal_
+
+
+class Mlp(nn.Module):
+    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
+        super().__init__()
+        out_features = out_features or in_features
+        hidden_features = hidden_features or in_features
+        self.fc1 = nn.Linear(in_features, hidden_features)
+        self.act = act_layer()
+        self.fc2 = nn.Linear(hidden_features, out_features)
+        self.drop = nn.Dropout(drop)
+
+    def forward(self, x):
+        x = self.fc1(x)
+        x = self.act(x)
+        x = self.drop(x)
+        x = self.fc2(x)
+        x = self.drop(x)
+        return x
+
+
+def window_partition(x, window_size):
+    """
+    Args:
+        x: (B, H, W, C)
+        window_size (int): window size
+
+    Returns:
+        windows: (num_windows*B, window_size, window_size, C)
+    """
+    B, H, W, C = x.shape
+    x = x.view(B, H // window_size, window_size, W // window_size, window_size, C)
+    windows = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(-1, window_size, window_size, C)
+    return windows
+
+
+def window_reverse(windows, window_size, H, W):
+    """
+    Args:
+        windows: (num_windows*B, window_size, window_size, C)
+        window_size (int): Window size
+        H (int): Height of image
+        W (int): Width of image
+
+    Returns:
+        x: (B, H, W, C)
+    """
+    B = int(windows.shape[0] / (H * W / window_size / window_size))
+    x = windows.view(B, H // window_size, W // window_size, window_size, window_size, -1)
+    x = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(B, H, W, -1)
+    return x
+
+
+class WindowAttention(nn.Module):
+    r""" Window based multi-head self attention (W-MSA) module with relative position bias.
+    It supports both of shifted and non-shifted window.
+
+    Args:
+        dim (int): Number of input channels.
+        window_size (tuple[int]): The height and width of the window.
+        num_heads (int): Number of attention heads.
+        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
+        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set
+        attn_drop (float, optional): Dropout ratio of attention weight. Default: 0.0
+        proj_drop (float, optional): Dropout ratio of output. Default: 0.0
+    """
+
+    def __init__(self, dim, window_size, num_heads, qkv_bias=True, qk_scale=None, attn_drop=0., proj_drop=0.):
+
+        super().__init__()
+        self.dim = dim
+        self.window_size = window_size  # Wh, Ww
+        self.num_heads = num_heads
+        head_dim = dim // num_heads
+        self.scale = qk_scale or head_dim ** -0.5
+
+        # define a parameter table of relative position bias
+        self.relative_position_bias_table = nn.Parameter(
+            torch.zeros((2 * window_size[0] - 1) * (2 * window_size[1] - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH
+
+        # get pair-wise relative position index for each token inside the window
+        coords_h = torch.arange(self.window_size[0])
+        coords_w = torch.arange(self.window_size[1])
+        coords = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, Wh, Ww
+        coords_flatten = torch.flatten(coords, 1)  # 2, Wh*Ww
+        relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, Wh*Ww, Wh*Ww
+        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
+        relative_coords[:, :, 0] += self.window_size[0] - 1  # shift to start from 0
+        relative_coords[:, :, 1] += self.window_size[1] - 1
+        relative_coords[:, :, 0] *= 2 * self.window_size[1] - 1
+        relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
+        self.register_buffer("relative_position_index", relative_position_index)
+
+        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
+        self.attn_drop = nn.Dropout(attn_drop)
+        self.proj = nn.Linear(dim, dim)
+
+        self.proj_drop = nn.Dropout(proj_drop)
+
+        trunc_normal_(self.relative_position_bias_table, std=.02)
+        self.softmax = nn.Softmax(dim=-1)
+
+    def forward(self, x, mask=None):
+        """
+        Args:
+            x: input features with shape of (num_windows*B, N, C)
+            mask: (0/-inf) mask with shape of (num_windows, Wh*Ww, Wh*Ww) or None
+        """
+        B_, N, C = x.shape
+        qkv = self.qkv(x).reshape(B_, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
+        q, k, v = qkv[0], qkv[1], qkv[2]  # make torchscript happy (cannot use tensor as tuple)
+
+        q = q * self.scale
+        attn = (q @ k.transpose(-2, -1))
+
+        relative_position_bias = self.relative_position_bias_table[self.relative_position_index.view(-1)].view(
+            self.window_size[0] * self.window_size[1], self.window_size[0] * self.window_size[1], -1)  # Wh*Ww,Wh*Ww,nH
+        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, Wh*Ww, Wh*Ww
+        attn = attn + relative_position_bias.unsqueeze(0)
+
+        if mask is not None:
+            nW = mask.shape[0]
+            attn = attn.view(B_ // nW, nW, self.num_heads, N, N) + mask.unsqueeze(1).unsqueeze(0)
+            attn = attn.view(-1, self.num_heads, N, N)
+            attn = self.softmax(attn)
+        else:
+            attn = self.softmax(attn)
+
+        attn = self.attn_drop(attn)
+
+        x = (attn @ v).transpose(1, 2).reshape(B_, N, C)
+        x = self.proj(x)
+        x = self.proj_drop(x)
+        return x
+
+    def extra_repr(self) -> str:
+        return f'dim={self.dim}, window_size={self.window_size}, num_heads={self.num_heads}'
+
+    def flops(self, N):
+        # calculate flops for 1 window with token length of N
+        flops = 0
+        # qkv = self.qkv(x)
+        flops += N * self.dim * 3 * self.dim
+        # attn = (q @ k.transpose(-2, -1))
+        flops += self.num_heads * N * (self.dim // self.num_heads) * N
+        # x = (attn @ v)
+        flops += self.num_heads * N * N * (self.dim // self.num_heads)
+        # x = self.proj(x)
+        flops += N * self.dim * self.dim
+        return flops
+
+
+class SwinTransformerBlock(nn.Module):
+    r""" Swin Transformer Block.
+
+    Args:
+        dim (int): Number of input channels.
+        input_resolution (tuple[int]): Input resolution.
+        num_heads (int): Number of attention heads.
+        window_size (int): Window size.
+        shift_size (int): Shift size for SW-MSA.
+        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
+        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
+        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
+        drop (float, optional): Dropout rate. Default: 0.0
+        attn_drop (float, optional): Attention dropout rate. Default: 0.0
+        drop_path (float, optional): Stochastic depth rate. Default: 0.0
+        act_layer (nn.Module, optional): Activation layer. Default: nn.GELU
+        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
+    """
+
+    def __init__(self, dim, input_resolution, num_heads, window_size=7, shift_size=0,
+                 mlp_ratio=4., qkv_bias=True, qk_scale=None, drop=0., attn_drop=0., drop_path=0.,
+                 act_layer=nn.GELU, norm_layer=nn.LayerNorm):
+        super().__init__()
+        self.dim = dim
+        self.input_resolution = input_resolution
+        self.num_heads = num_heads
+        self.window_size = window_size
+        self.shift_size = shift_size
+        self.mlp_ratio = mlp_ratio
+        if min(self.input_resolution) <= self.window_size:
+            # if window size is larger than input resolution, we don't partition windows
+            self.shift_size = 0
+            self.window_size = min(self.input_resolution)
+        assert 0 <= self.shift_size < self.window_size, "shift_size must in 0-window_size"
+
+        self.norm1 = norm_layer(dim)
+        self.attn = WindowAttention(
+            dim, window_size=to_2tuple(self.window_size), num_heads=num_heads,
+            qkv_bias=qkv_bias, qk_scale=qk_scale, attn_drop=attn_drop, proj_drop=drop)
+
+        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
+        self.norm2 = norm_layer(dim)

|

| 207 |

+

mlp_hidden_dim = int(dim * mlp_ratio)

|

| 208 |

+

self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

|

| 209 |

+

|

| 210 |

+

if self.shift_size > 0:

|

| 211 |

+

attn_mask = self.calculate_mask(self.input_resolution)

|

| 212 |

+

else:

|

| 213 |

+

attn_mask = None

|

| 214 |

+

|

| 215 |

+

self.register_buffer("attn_mask", attn_mask)

    def calculate_mask(self, x_size):
        # calculate attention mask for SW-MSA
        H, W = x_size
        img_mask = torch.zeros((1, H, W, 1))  # 1 H W 1
        h_slices = (slice(0, -self.window_size),
                    slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        w_slices = (slice(0, -self.window_size),
                    slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        cnt = 0
        for h in h_slices:
            for w in w_slices:
                img_mask[:, h, w, :] = cnt
                cnt += 1

        mask_windows = window_partition(img_mask, self.window_size)  # nW, window_size, window_size, 1
        mask_windows = mask_windows.view(-1, self.window_size * self.window_size)
        attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)
        attn_mask = attn_mask.masked_fill(attn_mask != 0, float(-100.0)).masked_fill(attn_mask == 0, float(0.0))

        return attn_mask

    def forward(self, x, x_size):
        H, W = x_size
        B, L, C = x.shape
        # assert L == H * W, "input feature has wrong size"

        shortcut = x
        x = self.norm1(x)
        x = x.view(B, H, W, C)

        # cyclic shift
        if self.shift_size > 0:
            shifted_x = torch.roll(x, shifts=(-self.shift_size, -self.shift_size), dims=(1, 2))
        else:
            shifted_x = x

        # partition windows
        x_windows = window_partition(shifted_x, self.window_size)  # nW*B, window_size, window_size, C
        x_windows = x_windows.view(-1, self.window_size * self.window_size, C)  # nW*B, window_size*window_size, C

        # W-MSA/SW-MSA (stays compatible with test images whose sizes are a multiple of the window size)
        if self.input_resolution == x_size:
            attn_windows = self.attn(x_windows, mask=self.attn_mask)  # nW*B, window_size*window_size, C
        else:
            attn_windows = self.attn(x_windows, mask=self.calculate_mask(x_size).to(x.device))

        # merge windows
        attn_windows = attn_windows.view(-1, self.window_size, self.window_size, C)
        shifted_x = window_reverse(attn_windows, self.window_size, H, W)  # B H' W' C

        # reverse cyclic shift
        if self.shift_size > 0:
            x = torch.roll(shifted_x, shifts=(self.shift_size, self.shift_size), dims=(1, 2))
        else:
            x = shifted_x
        x = x.view(B, H * W, C)

        # FFN
        x = shortcut + self.drop_path(x)
        x = x + self.drop_path(self.mlp(self.norm2(x)))

        return x

    def extra_repr(self) -> str:
        return f"dim={self.dim}, input_resolution={self.input_resolution}, num_heads={self.num_heads}, " \
               f"window_size={self.window_size}, shift_size={self.shift_size}, mlp_ratio={self.mlp_ratio}"

    def flops(self):
        flops = 0
        H, W = self.input_resolution
        # norm1
        flops += self.dim * H * W
        # W-MSA/SW-MSA
        nW = H * W / self.window_size / self.window_size
        flops += nW * self.attn.flops(self.window_size * self.window_size)
        # mlp
        flops += 2 * H * W * self.dim * self.dim * self.mlp_ratio
        # norm2
        flops += self.dim * H * W
        return flops
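To make the SW-MSA mask built by `calculate_mask` easier to inspect, here is a minimal pure-Python sketch of the same idea: label each position with a region id, cut the label map into windows, and block attention between positions whose ids differ. The helper name `shifted_window_mask` is hypothetical, nested lists stand in for tensors, and the sketch assumes `0 < shift_size < window_size` and a height and width divisible by the window size.

```python
def shifted_window_mask(height, width, window_size, shift_size, neg=-100.0):
    """Return one (ws*ws) x (ws*ws) additive mask per window, as nested lists."""
    if not 0 < shift_size < window_size:
        raise ValueError("this sketch assumes 0 < shift_size < window_size")

    def bands(size):
        # Same three bands as the slices in calculate_mask.
        return [range(0, size - window_size),
                range(size - window_size, size - shift_size),
                range(size - shift_size, size)]

    # Give every position the id of the (row band, column band) region it lies in.
    region = [[0] * width for _ in range(height)]
    cnt = 0
    for rows in bands(height):
        for cols in bands(width):
            for r in rows:
                for c in cols:
                    region[r][c] = cnt
            cnt += 1

    # Partition into windows (row-major) and compare region ids pairwise.
    masks = []
    for top in range(0, height, window_size):
        for left in range(0, width, window_size):
            ids = [region[top + i][left + j]
                   for i in range(window_size) for j in range(window_size)]
            masks.append([[0.0 if a == b else neg for b in ids] for a in ids])
    return masks


masks = shifted_window_mask(4, 4, window_size=2, shift_size=1)
print(len(masks))   # 4
print(masks[1][0])  # [0.0, -100.0, 0.0, -100.0]
```

In this 4x4 example the first window lies entirely inside one region, so its mask is all zeros. The other windows straddle region boundaries created by the cyclic shift, so positions that were not neighbours in the unshifted image receive -100 before the softmax.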

class PatchMerging(nn.Module):
    r""" Patch Merging Layer.

    Args:
        input_resolution (tuple[int]): Resolution of input feature.
        dim (int): Number of input channels.
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self, input_resolution, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.input_resolution = input_resolution
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)
        self.norm = norm_layer(4 * dim)

    def forward(self, x):
        """
        x: B, H*W, C
        """
        H, W = self.input_resolution
        B, L, C = x.shape
        assert L == H * W, "input feature has wrong size"
        assert H % 2 == 0 and W % 2 == 0, f"x size ({H}*{W}) is not even."

        x = x.view(B, H, W, C)

        x0 = x[:, 0::2, 0::2, :]  # B H/2 W/2 C
        x1 = x[:, 1::2, 0::2, :]  # B H/2 W/2 C
        x2 = x[:, 0::2, 1::2, :]  # B H/2 W/2 C
        x3 = x[:, 1::2, 1::2, :]  # B H/2 W/2 C
        x = torch.cat([x0, x1, x2, x3], -1)  # B H/2 W/2 4*C
        x = x.view(B, -1, 4 * C)  # B H/2*W/2 4*C

        x = self.norm(x)
        x = self.reduction(x)

        return x

    def extra_repr(self) -> str:
        return f"input_resolution={self.input_resolution}, dim={self.dim}"

    def flops(self):
        H, W = self.input_resolution
        flops = H * W * self.dim
        flops += (H // 2) * (W // 2) * 4 * self.dim * 2 * self.dim
        return flops
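The strided slicing in `PatchMerging.forward` can be hard to picture, so the following pure-Python sketch applies the same 2x2 gather to a tiny grid. The helper name `merge_patches` is hypothetical and nested lists stand in for tensors. It only shows the concatenation order (`x0`, `x1`, `x2`, `x3`) and leaves out the normalization and the linear reduction from 4*C to 2*C channels.

```python
def merge_patches(grid):
    """grid: H x W nested list whose cells are per-position feature lists of length C.

    Returns (H/2 * W/2) tokens, each the concatenation of a 2x2 neighbourhood in the
    order used by PatchMerging.forward: top-left, bottom-left, top-right, bottom-right.
    """
    height, width = len(grid), len(grid[0])
    if height % 2 or width % 2:
        raise ValueError(f"grid size ({height}*{width}) is not even")
    tokens = []
    for r in range(0, height, 2):
        for c in range(0, width, 2):
            tokens.append(grid[r][c]              # x0: even row, even column
                          + grid[r + 1][c]        # x1: odd row, even column
                          + grid[r][c + 1]        # x2: even row, odd column
                          + grid[r + 1][c + 1])   # x3: odd row, odd column
    return tokens


# A 2x4 grid with one channel per position; values are just position labels.
grid = [[[0], [1], [2], [3]],
        [[4], [5], [6], [7]]]
print(merge_patches(grid))  # [[0, 4, 1, 5], [2, 6, 3, 7]]
```

Each output token holds four times as many channels as an input position, and the number of tokens drops by a factor of four, which matches the `B H/2*W/2 4*C` shape noted in the comments above.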

class BasicLayer(nn.Module):
    """ A basic Swin Transformer layer for one stage.

    Args:
        dim (int): Number of input channels.
        input_resolution (tuple[int]): Input resolution.
        depth (int): Number of blocks.
        num_heads (int): Number of attention heads.
        window_size (int): Local window size.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set.
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float | tuple[float], optional): Stochastic depth rate. Default: 0.0
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
        downsample (nn.Module | None, optional): Downsample layer at the end of the layer. Default: None
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False.
    """

    def __init__(self, dim, input_resolution, depth, num_heads, window_size,
                 mlp_ratio=4., qkv_bias=True, qk_scale=None, drop=0., attn_drop=0.,
                 drop_path=0., norm_layer=nn.LayerNorm, downsample=None, use_checkpoint=False):

        super().__init__()
        self.dim = dim
        self.input_resolution = input_resolution
        self.depth = depth
        self.use_checkpoint = use_checkpoint

        # build blocks
        self.blocks = nn.ModuleList([
            SwinTransformerBlock(dim=dim, input_resolution=input_resolution,
                                 num_heads=num_heads, window_size=window_size,
                                 shift_size=0 if (i % 2 == 0) else window_size // 2,
                                 mlp_ratio=mlp_ratio,
                                 qkv_bias=qkv_bias, qk_scale=qk_scale,
                                 drop=drop, attn_drop=attn_drop,
                                 # accept a per-block list or tuple of rates, as the docstring allows
                                 drop_path=drop_path[i] if isinstance(drop_path, (list, tuple)) else drop_path,
                                 norm_layer=norm_layer)
            for i in range(depth)])

        # patch merging layer