Dragonfly-Med Model Card

Note: Users may use this model in accordance with the Llama 3.1 Community License Agreement. In addition, because the license of the dataset used to train this model prohibits commercial use, Dragonfly-Med is restricted to non-commercial use only.

Model Details

Dragonfly-Med is a multimodal biomedical visual-language model, trained by instruction tuning on Llama 3.1.

- Developed by: Together AI

- Model type: An autoregressive visual-language model based on the transformer architecture

- License: Llama 3.1 Community License Agreement

- Finetuned from model: Llama 3.1

Model Sources

- Repository: https://github.com/togethercomputer/Dragonfly

- Paper: https://arxiv.org/abs/2406.00977

Uses

The primary use of Dragonfly-Med is research on large visual-language models. It is intended mainly for researchers and hobbyists in natural language processing, machine learning, and artificial intelligence.

How to Get Started with the Model

💿 Installation

Create a conda environment and install the necessary packages:

conda env create -f environment.yml

conda activate dragonfly_env

Install flash attention

pip install flash-attn --no-build-isolation

As a final step, install the package itself in editable mode:

pip install --upgrade -e .
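Before moving on to inference, it can help to confirm that the modules imported later in this card are visible to the active environment. The snippet below is a minimal sketch, not part of the Dragonfly codebase: the helper name `missing_modules` and the list `REQUIRED_MODULES` are hypothetical, and the list simply mirrors the top-level imports used in the inference steps.

```python
import importlib.util

# Top-level module names imported by the inference example in this card.
# This list is an assumption for illustration; adjust it to your setup.
REQUIRED_MODULES = ["torch", "transformers", "PIL", "flash_attn", "dragonfly"]


def missing_modules(names):
    """Return the names that cannot be located on the current import path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_modules(REQUIRED_MODULES)
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules were found.")
```

The check only looks modules up without importing them, so it runs quickly and does not need a GPU.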

🧠 Inference

Once installation is complete, you can run inference by following the steps below.

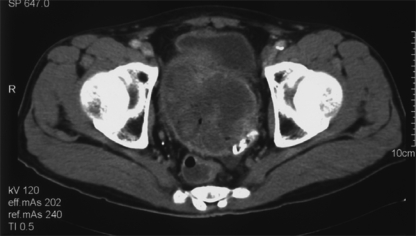

Question: Provide a brief description of the given image.

Load necessary packages

import torch

from PIL import Image

from transformers import AutoProcessor, AutoTokenizer

from dragonfly.models.modeling_dragonfly import DragonflyForCausalLM

from dragonfly.models.processing_dragonfly import DragonflyProcessor

from pipeline.train.train_utils import random_seed

Instantiate the tokenizer, processor, and model.

device = torch.device("cuda:0")

tokenizer = AutoTokenizer.from_pretrained("togethercomputer/Llama-3.1-8B-Dragonfly-Med-v2")

clip_processor = AutoProcessor.from_pretrained("openai/clip-vit-large-patch14-336")

image_processor = clip_processor.image_processor

processor = DragonflyProcessor(image_processor=image_processor, tokenizer=tokenizer, image_encoding_style="llava-hd")

model = DragonflyForCausalLM.from_pretrained("togethercomputer/Llama-3.1-8B-Dragonfly-Med-v2")

model = model.to(torch.bfloat16)

model = model.to(device)

Now, let's load the image and process it.

image = Image.open("ROCO_04197.jpg")

image = image.convert("RGB")

images = [image]

# images = [None] # if you do not want to pass any images

text_prompt = "<|start_header_id|>user<|end_header_id|>\n\nProvide a brief description of the given image.<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n\n"

inputs = processor(text=[text_prompt], images=images, max_length=1024, return_tensors="pt", is_generate=True)

inputs = inputs.to(device)
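The prompt string above wraps the question in Llama 3 style header and end-of-turn markers. If you want to ask different questions, a small helper can build the same string for you. This is a sketch only: `build_prompt` is a hypothetical name, not a function from the Dragonfly repository, and it covers just the single-turn user question shown in this card.

```python
def build_prompt(question: str) -> str:
    """Wrap one user question in the chat markers used by the example prompt."""
    return (
        "<|start_header_id|>user<|end_header_id|>\n\n"
        f"{question}"
        "<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n\n"
    )


# Reproduces the text_prompt used in the example above.
text_prompt = build_prompt("Provide a brief description of the given image.")
```

The result can be passed to the processor as `text=[text_prompt]`, exactly as in the step above.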

Finally, let us generate the response from the model.

temperature = 0

with torch.inference_mode():

    generation_output = model.generate(**inputs, max_new_tokens=1024, eos_token_id=tokenizer.encode("<|eot_id|>"), do_sample=temperature > 0, temperature=temperature, use_cache=True)

generation_text = processor.batch_decode(generation_output, skip_special_tokens=False)
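The call above switches between greedy decoding and sampling through `do_sample=temperature > 0`. One way to keep that choice in a single place is a small helper that builds the keyword arguments. This is a sketch under assumptions: `generation_kwargs` is a hypothetical helper, and leaving `temperature` out for greedy decoding is a design choice made here (some versions of transformers may warn when a temperature is passed while sampling is off), not something the original example does.

```python
def generation_kwargs(temperature: float, max_new_tokens: int = 1024) -> dict:
    """Build keyword arguments for model.generate from a temperature setting."""
    kwargs = {"max_new_tokens": max_new_tokens, "use_cache": True}
    if temperature > 0:
        # Sampling: pass the temperature through.
        kwargs.update(do_sample=True, temperature=temperature)
    else:
        # Greedy decoding: temperature is not used, so it is left out.
        kwargs["do_sample"] = False
    return kwargs
```

The returned dictionary could then be unpacked into the call, for example `model.generate(**inputs, eos_token_id=tokenizer.encode("<|eot_id|>"), **generation_kwargs(temperature))`.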

An example response.

Computed tomography scan showing a large heterogenous mass in the pelvis<|eot_id|>
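Because decoding uses `skip_special_tokens=False`, the text still ends with the `<|eot_id|>` marker, as the example response shows. The helper below is a minimal sketch for trimming it; `clean_response` is a hypothetical name. It also handles the case where the decoded text echoes the prompt, which is an assumption about possible output rather than documented behavior.

```python
ASSISTANT_HEADER = "<|start_header_id|>assistant<|end_header_id|>\n\n"
EOT = "<|eot_id|>"


def clean_response(decoded: str) -> str:
    """Return the answer text without chat markers."""
    # If the prompt is echoed, keep only what follows the last assistant header.
    _, _, tail = decoded.rpartition(ASSISTANT_HEADER)
    # Drop the end-of-turn marker and anything after it.
    answer, _, _ = tail.partition(EOT)
    return answer.strip()
```

Applied to each entry of `generation_text`, for example `[clean_response(t) for t in generation_text]`, this would leave only the plain description.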

Training Details

See more details in the "Implementation" section of our paper.

Evaluation

See more details in the "Results" section of our paper.

🏆 Credits

We would like to acknowledge the following resources that were instrumental in the development of Dragonfly:

- Meta Llama 3.1: We used the Llama 3.1 model as our foundational language model.

- CLIP: Our vision backbone is the CLIP model from OpenAI.

- Our codebase is built upon the following two codebases:

📚 BibTeX

@misc{thapa2024dragonfly,

title={Dragonfly: Multi-Resolution Zoom-In Encoding Enhances Vision-Language Models},

author={Rahul Thapa and Kezhen Chen and Ian Covert and Rahul Chalamala and Ben Athiwaratkun and Shuaiwen Leon Song and James Zou},

year={2024},

eprint={2406.00977},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

Model Card Authors

Rahul Thapa, Kezhen Chen, Rahul Chalamala

Model Card Contact

Rahul Thapa (rahulthapa@together.ai), Kezhen Chen (kezhen@together.ai)
