Model Card for Llama3-8B-RMU

This card accompanies Llama3-8B-RMU, the RMU model used in the paper LLM Defenses Are Not Robust to Multi-Turn Human Jailbreaks Yet.

Paper Abstract

Recent large language model (LLM) defenses have greatly improved models’ ability to refuse harmful queries, even when adversarially attacked. However, LLM defenses are primarily evaluated against automated adversarial attacks in a single turn of conversation, an insufficient threat model for real-world malicious use. We demonstrate that multi-turn human jailbreaks uncover significant vulnerabilities, exceeding 70% attack success rate (ASR) on HarmBench against defenses that report single-digit ASRs with automated single-turn attacks. Human jailbreaks also reveal vulnerabilities in machine unlearning defenses, successfully recovering dual-use biosecurity knowledge from unlearned models. We compile these results into Multi-Turn Human Jailbreaks (MHJ), a dataset of 2,912 prompts across 537 multi-turn jailbreaks. We publicly release MHJ alongside a compendium of jailbreak tactics developed across dozens of commercial red teaming engagements, supporting research towards stronger LLM defenses.

RMU (Representation Misdirection for Unlearning) Model

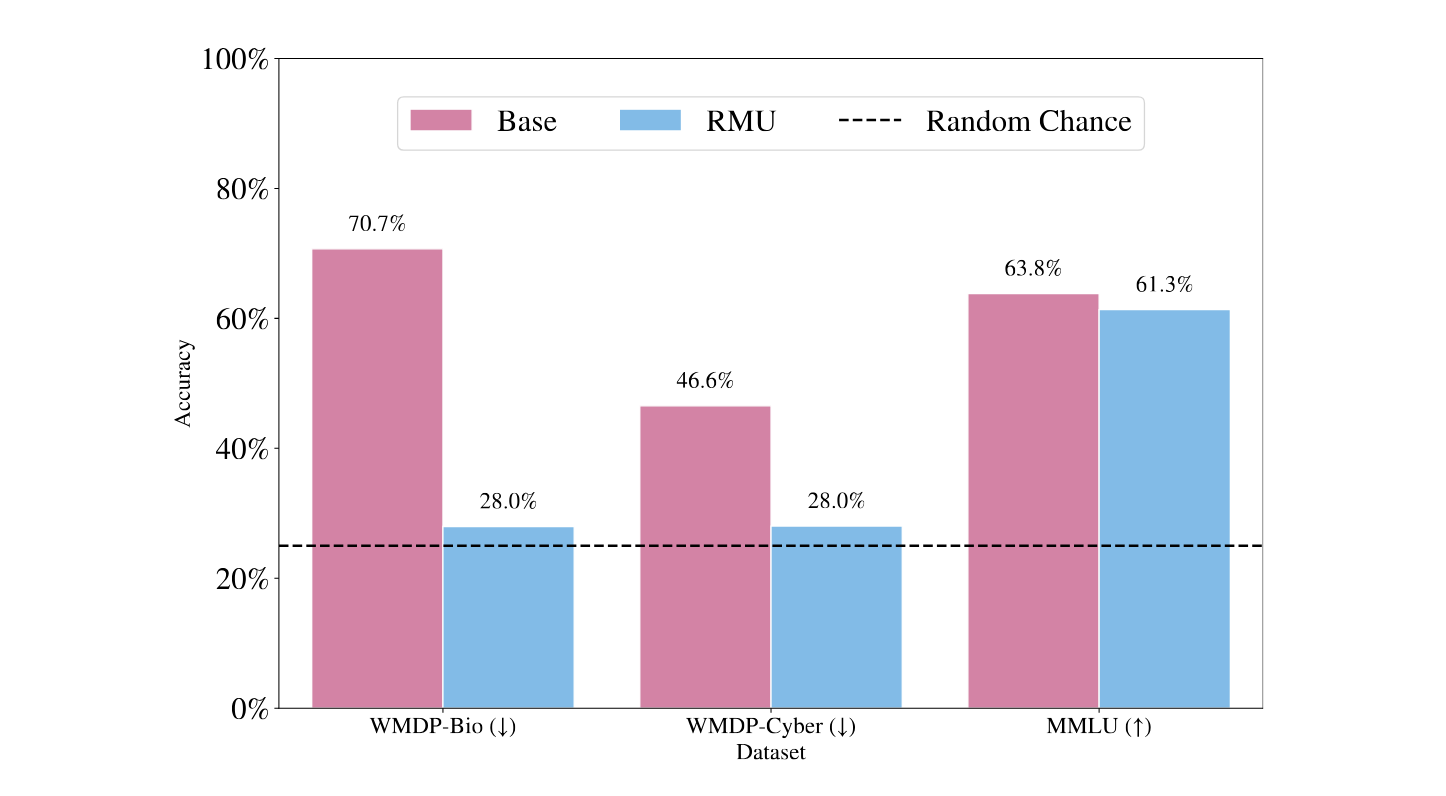

For the WMDP-Bio evaluation, we employ the RMU unlearning method. The original paper applies RMU to the zephyr-7b-beta model, but to standardize defenses and use a more performant model, we apply RMU to llama-3-8b-instruct, the same base model as all other defenses in this paper. We conduct a hyperparameter search over batches ∈ {200, 400}, c ∈ {5, 20, 50, 200}, α ∈ {200, 500, 2000, 5000}, and lr ∈ {2 × 10⁻⁵, 5 × 10⁻⁵, 2 × 10⁻⁴}. We select batches = 400, c = 50, α = 5000, and lr = 2 × 10⁻⁴, and retain the hyperparameters layer_ids = [5, 6, 7] and param_ids = [6] from Li et al. We validate our results in Figure 8, demonstrating a reduction in WMDP performance but retention of general capabilities (MMLU).

The figure above shows Llama-3-8B-Instruct multiple-choice benchmark accuracies before and after RMU.
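To make the size of the hyperparameter search in this section concrete, the sketch below enumerates the listed values as a full Cartesian grid. That the search was exhaustive is an assumption; the text only lists the candidate values. The names `SEARCH_SPACE`, `SELECTED`, `FIXED`, and `enumerate_configs` are illustrative and do not come from the authors' code. In Li et al., c is the steering coefficient applied to forget-set activations and α weights the retain loss.

```python
from itertools import product

# Candidate values as listed in the text (hypothetical variable names).
SEARCH_SPACE = {
    "batches": [200, 400],
    "c": [5, 20, 50, 200],
    "alpha": [200, 500, 2000, 5000],
    "lr": [2e-5, 5e-5, 2e-4],
}

# Configuration the card reports selecting.
SELECTED = {"batches": 400, "c": 50, "alpha": 5000, "lr": 2e-4}

# Values retained from Li et al. rather than searched.
FIXED = {"layer_ids": [5, 6, 7], "param_ids": [6]}


def enumerate_configs(space):
    """Return every combination in the search space as a list of dicts."""
    keys = list(space)
    return [dict(zip(keys, values)) for values in product(*(space[k] for k in keys))]


configs = enumerate_configs(SEARCH_SPACE)
print(len(configs))         # 2 * 4 * 4 * 3 = 96 combinations
print(SELECTED in configs)  # True: the reported choice lies inside the grid
```

Under the full-grid assumption, the search covers 96 configurations, each of which would be combined with the `FIXED` values before running RMU.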

Model Use

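    import transformers
    import torch

    # Hugging Face Hub identifier of the RMU-unlearned model.
    model_id = "ScaleAI/mhj-llama3-8b-rmu"

    # Build a text-generation pipeline in bfloat16, placing weights automatically.
    pipeline = transformers.pipeline(
        "text-generation",
        model=model_id,
        model_kwargs={"torch_dtype": torch.bfloat16},
        device_map="auto",
    )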
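Once the pipeline is built, a single chat turn could be sent through it roughly as sketched below. This is a minimal sketch, not the authors' evaluation code: `build_messages` and `ask` are hypothetical helper names, and the sketch assumes a recent transformers release in which a text-generation pipeline accepts a list of chat messages and returns the conversation with the assistant reply appended.

```python
def build_messages(user_prompt, system_prompt=None):
    """Build a chat-style message list for a text-generation pipeline."""
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_prompt})
    return messages


def ask(pipe, user_prompt, system_prompt=None, max_new_tokens=256):
    """Send one user turn through `pipe` and return the assistant's reply text.

    Assumes `pipe` returns [{"generated_text": [...input messages..., reply]}],
    where `reply` is a dict with "role" and "content" keys.
    """
    messages = build_messages(user_prompt, system_prompt)
    outputs = pipe(messages, max_new_tokens=max_new_tokens)
    reply = outputs[0]["generated_text"][-1]
    return reply["content"]
```

With the `pipeline` object created above, `ask(pipeline, "What does MMLU measure?")` would return the model's reply as a string. Passing the pipeline in as an argument keeps the helper independent of how the model was loaded.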

BibTeX Citation

If you use this model, please consider citing:

    @misc{li2024llmdefensesrobustmultiturn,
      title={LLM Defenses Are Not Robust to Multi-Turn Human Jailbreaks Yet},
      author={Nathaniel Li and Ziwen Han and Ian Steneker and Willow Primack and Riley Goodside and Hugh Zhang and Zifan Wang and Cristina Menghini and Summer Yue},
      year={2024},
      eprint={2408.15221},
      archivePrefix={arXiv},
      primaryClass={cs.LG},
      url={https://arxiv.org/abs/2408.15221},
    }

Base model: meta-llama/Meta-Llama-3-8B-Instruct