Spaces:

Running

title: Scientific Document Insights Q/A

emoji: 📝

colorFrom: yellow

colorTo: pink

sdk: streamlit

sdk_version: 1.36.0

app_file: streamlit_app.py

pinned: false

license: apache-2.0

app_port: 8501

DocumentIQA: Scientific Document Insights Q/A

Work in progress :construction_worker:

https://lfoppiano-document-qa.hf.space/

Introduction

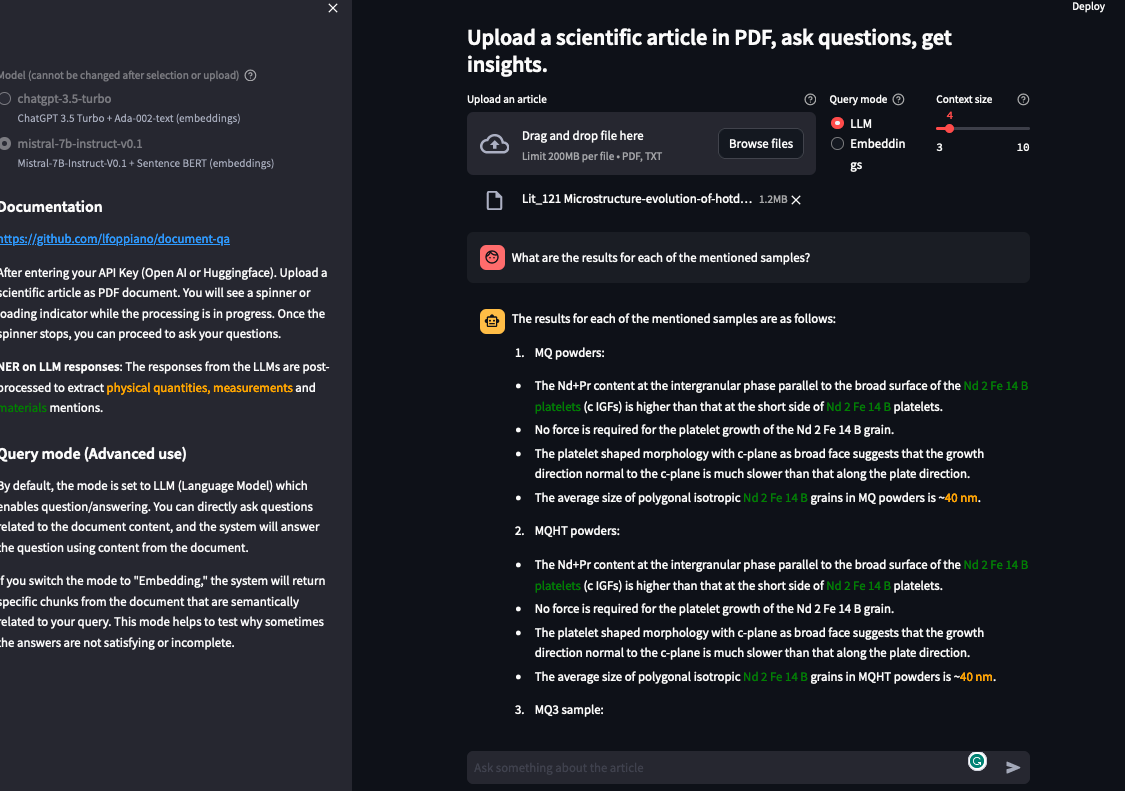

Question/Answering on scientific documents using LLMs: ChatGPT-3.5-turbo, GPT4, GPT4-Turbo, Mistral-7b-instruct and Zephyr-7b-beta. The streamlit application demonstrates the implementation of a RAG (Retrieval Augmented Generation) on scientific documents. Different to most of the projects, we focus on scientific articles and we extract text from a structured document. We target only the full-text using Grobid which provides cleaner results than the raw PDF2Text converter (which is comparable with most of other solutions).

Additionally, this frontend provides the visualisation of named entities on LLM responses to extract physical quantities, measurements (with grobid-quantities) and materials mentions (with grobid-superconductors).

(The image on the right was generated with https://huggingface.co/spaces/stabilityai/stable-diffusion)

Getting started

- Select the model+embedding combination you want to use

- If using gpt3.5-turbo, gpt4 or gpt4-turbo, enter your API Key (Open AI).

- Upload a scientific article as a PDF document. You will see a spinner or loading indicator while the processing is in progress.

- Once the spinner disappears, you can proceed to ask your questions

Documentation

Embedding selection

In the latest version there is the possibility to select both embedding functions and LLMs. There are some limitation, OpenAI embeddings cannot be used with open source models, and viceversa.

Context size

Allow to change the number of blocks from the original document that are considered for responding. The default size of each block is 250 tokens (which can be changed before uploading the first document). With default settings, each question uses around 1000 tokens.

NOTE: if the chat answers something like "the information is not provided in the given context", changing the context size will likely help.

Chunks size

When uploaded, each document is split into blocks of a determined size (250 tokens by default). This setting allows users to modify the size of such blocks. Smaller blocks will result in a smaller context, yielding more precise sections of the document. Larger blocks will result in a larger context less constrained around the question.

Query mode

Indicates whether sending a question to the LLM (Language Model) or to the vector storage.

- LLM (default) enables question/answering related to the document content.

- Embeddings: the response will consist of the raw text from the document related to the question (based on the embeddings). This mode helps to test why sometimes the answers are not satisfying or incomplete.

- Question coefficient (experimental): provide a coefficient that indicate how the question has been far or closed to the retrieved context

NER (Named Entities Recognition)

This feature is specifically crafted for people working with scientific documents in materials science. It enables to run NER on the response from the LLM, to identify materials mentions and properties (quantities, measurements). This feature leverages both grobid-quantities and grobid-superconductors external services.

Troubleshooting

Error: streamlit: Your system has an unsupported version of sqlite3. Chroma requires sqlite3 >= 3.35.0.

Here the solution on Linux.

For more information, see the details on Chroma website.

Disclaimer on Data, Security, and Privacy ⚠️

Please read carefully:

- Avoid uploading sensitive data. We temporarily store text from the uploaded PDF documents only for processing your request, and we disclaim any responsibility for subsequent use or handling of the submitted data by third-party LLMs.

- Mistral and Zephyr are FREE to use and do not require any API, but as we leverage the free API entrypoint, there is no guarantee that all requests will go through. Use at your own risk.

- We do not assume responsibility for how the data is utilized by the LLM end-points API.

Development notes

To release a new version:

bump-my-version bump patchgit push --tags

To use docker:

docker run

lfoppiano/document-insights-qa:{latest_version)docker run

lfoppiano/document-insights-qa:latest-developfor the latest development version

To install the library with Pypi:

pip install document-qa-engine

Acknowledgement

The project was initiated at the National Institute for Materials Science (NIMS) in Japan. Currently, the development is possible thanks to ScienciLAB. This project was contributed by Guillaume Lambard and the Lambard-ML-Team, Pedro Ortiz Suarez, and Tomoya Mato. Thanks also to Patrice Lopez, the author of Grobid.