metadata

license: mit

tags:

- generated_from_trainer

metrics:

- bleu

- rouge

model-index:

- name: mbart-large-50-English_French_Translation_v2

results: []

language:

- en

- fr

mbart-large-50-English_French_Translation_v2

This model is a fine-tuned version of facebook/mbart-large-50, trained on the parallel translation corpus listed under Training and evaluation data. It achieves the following results on the evaluation set:

- Loss: 0.3902

- Bleu: 35.1914

- Rouge:

  - Rouge1: 0.641952430267112

  - Rouge2: 0.4572909036472911

  - RougeL: 0.607001331434416

  - RougeLsum: 0.6068905123656807

- Meteor: 0.5916610499445853
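The Bleu score above is on a 0 to 100 scale, while the Rouge and Meteor scores are on a 0 to 1 scale. For intuition about what a Rouge1 value measures, here is a minimal sketch of unigram-overlap F1. It assumes lowercasing and whitespace tokenization, and it is not the implementation that produced the numbers above; the function name `rouge1_f1` is hypothetical.

```python
from collections import Counter


def rouge1_f1(prediction: str, reference: str) -> float:
    """Simplified ROUGE-1 F1: unigram overlap between two strings."""
    pred_counts = Counter(prediction.lower().split())
    ref_counts = Counter(reference.lower().split())
    # Clipped overlap: a word counts at most as often as it occurs in both texts.
    overlap = sum((pred_counts & ref_counts).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(pred_counts.values())
    recall = overlap / sum(ref_counts.values())
    return 2 * precision * recall / (precision + recall)


if __name__ == "__main__":
    # A short prediction scored against a longer reference.
    print(rouge1_f1("the cat", "the cat sat on the mat"))
```

In this example every predicted word appears in the reference (precision 1), but only two of the six reference words are covered (recall 1/3), so the F1 lands between the two.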

Model description

This model translates French input text samples to English.

For more information on how it was created, check out the following link: https://github.com/DunnBC22/NLP_Projects/blob/main/Machine%20Translation/NLP%20Translation%20Project-EN:FR.ipynb
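A minimal inference sketch with the Transformers library is shown here. The Hub repository id is an assumption inferred from the model name and the GitHub account above, and the translation direction follows the description (French to English); swap the language codes if the checkpoint behaves otherwise. `fr_XX` and `en_XX` are the standard mBART-50 language codes. The helper name `translate` is hypothetical.

```python
# Assumption: the checkpoint is published on the Hub under this repo id.
MODEL_ID = "DunnBC22/mbart-large-50-English_French_Translation_v2"
SRC_LANG = "fr_XX"  # mBART-50 language code for French
TGT_LANG = "en_XX"  # mBART-50 language code for English


def translate(text: str, model_id: str = MODEL_ID,
              src_lang: str = SRC_LANG, tgt_lang: str = TGT_LANG) -> str:
    """Translate one string with an mBART-50 checkpoint (downloads weights on first call)."""
    # Imported lazily so this module loads even without transformers installed.
    from transformers import AutoModelForSeq2SeqLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForSeq2SeqLM.from_pretrained(model_id)

    # Tell the tokenizer which language tag to attach to the input.
    tokenizer.src_lang = src_lang
    inputs = tokenizer(text, return_tensors="pt")
    generated = model.generate(
        **inputs,
        # Force the decoder to start with the target-language token.
        forced_bos_token_id=tokenizer.convert_tokens_to_ids(tgt_lang),
        max_new_tokens=128,
    )
    return tokenizer.batch_decode(generated, skip_special_tokens=True)[0]
```

Calling `translate("Bonjour, comment allez-vous ?")` loads the model and returns the generated English string. For repeated calls, load the tokenizer and model once outside the function instead of on every call.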

Intended uses & limitations

This model is intended to demonstrate my ability to solve a complex problem using technology.

Training and evaluation data

Dataset Source: https://www.kaggle.com/datasets/hgultekin/paralel-translation-corpus-in-22-languages

English Input Text Lengths (in Words)

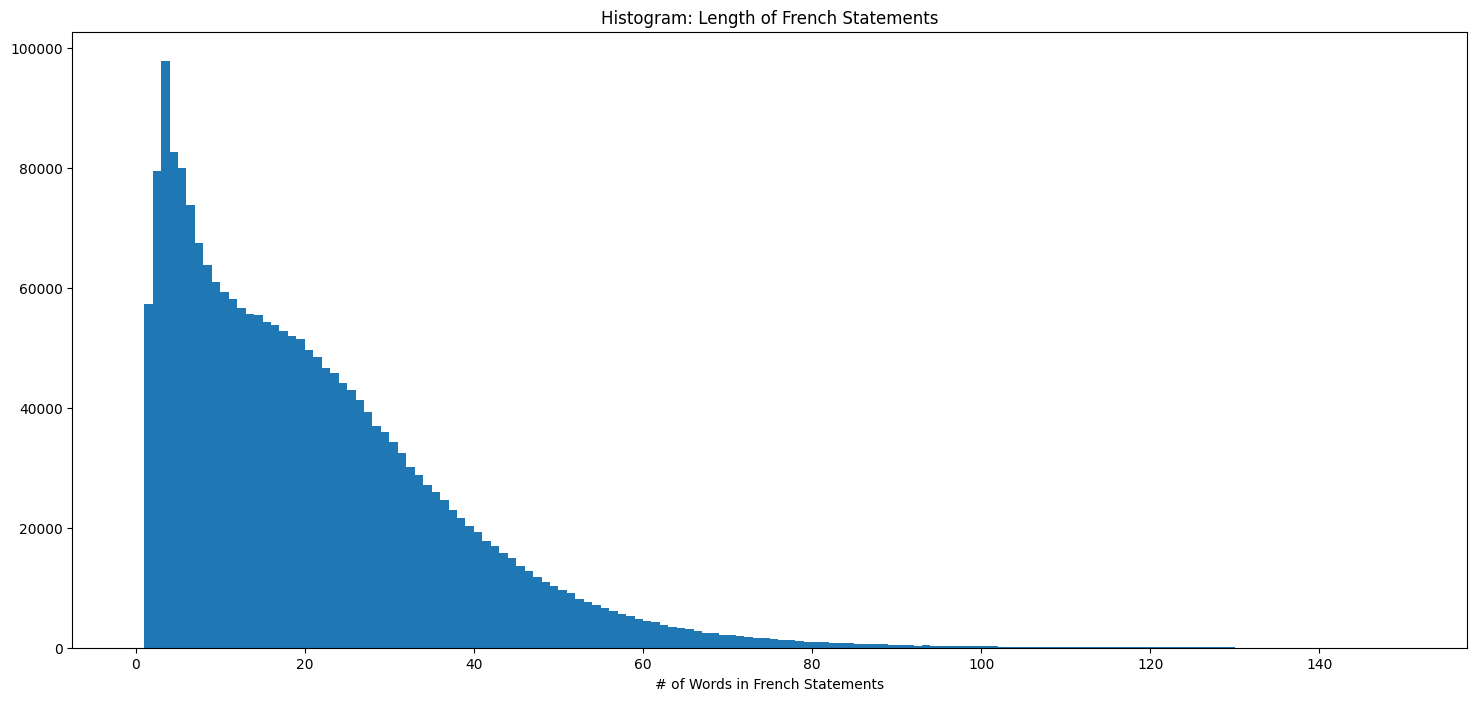

French Input Text Lengths (in Words)

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 2e-05

- train_batch_size: 32

- eval_batch_size: 32

- seed: 42

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- lr_scheduler_type: linear

- num_epochs: 1
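To make the `linear` scheduler setting concrete, the sketch below computes the learning rate at a given optimizer step. It assumes no warmup (none is listed above) and uses the 750 steps reported in the training results; the function name `linear_lr` is hypothetical and this is an illustration of the schedule shape, not the trainer's own code.

```python
BASE_LR = 2e-05     # learning_rate from the list above
TOTAL_STEPS = 750   # optimizer steps reported for the single epoch
WARMUP_STEPS = 0    # assumption: the card does not list any warmup


def linear_lr(step: int, base_lr: float = BASE_LR,
              total_steps: int = TOTAL_STEPS,
              warmup_steps: int = WARMUP_STEPS) -> float:
    """Learning rate at `step`: linear warmup, then linear decay to zero."""
    if step < warmup_steps:
        return base_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return base_lr * remaining / max(1, total_steps - warmup_steps)


if __name__ == "__main__":
    # Start, midpoint, and end of the run.
    for step in (0, 375, 750):
        print(step, linear_lr(step))
```

Under these assumptions the rate starts at 2e-05, is halved by the midpoint of the epoch, and reaches zero at the final step.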

Training results

| Training Loss | Epoch | Step | Validation Loss | Bleu | Rouge1 | Rouge2 | RougeL | RougeLsum | Meteor |

|---|---|---|---|---|---|---|---|---|---|

| 1.1677 | 1.0 | 750 | 0.3902 | 35.1914 | 0.6419 | 0.4573 | 0.6070 | 0.6069 | 0.5917 |

- All values in the table above are rounded to four decimal places.
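The step count and batch size together bound the size of the training split. The sketch below works this out, assuming a single device, no gradient accumulation, and a final batch that may be partial; none of these are stated in the card, and the function name `example_count_bounds` is hypothetical.

```python
import math

STEPS_PER_EPOCH = 750   # Step column in the table above
TRAIN_BATCH_SIZE = 32   # train_batch_size from the hyperparameters


def example_count_bounds(steps: int, batch_size: int) -> tuple[int, int]:
    """Smallest and largest dataset sizes that yield exactly `steps` batches."""
    smallest = (steps - 1) * batch_size + 1  # last batch holds a single example
    largest = steps * batch_size             # last batch is full
    return smallest, largest


if __name__ == "__main__":
    low, high = example_count_bounds(STEPS_PER_EPOCH, TRAIN_BATCH_SIZE)
    # Both ends of the range must round up to the same number of steps.
    assert math.ceil(low / TRAIN_BATCH_SIZE) == STEPS_PER_EPOCH
    assert math.ceil(high / TRAIN_BATCH_SIZE) == STEPS_PER_EPOCH
    print(low, high)
```

Under these assumptions the model saw roughly 24,000 sentence pairs during its single epoch.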

Framework versions

- Transformers 4.26.1

- PyTorch 1.12.1

- Datasets 2.9.0

- Tokenizers 0.12.1