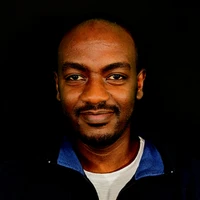

Yosef Worku Alemneh

rasyosef

AI & ML interests

Pretraining, Supervised Fine Tuning, Direct Preference Optimization, Retrieval Augmented Generation (RAG), Function Calling

Organizations

None yet

rasyosef's activity

Phi-2-Instruct-APO: aligned with Anchored Preference Optimization

5

#3 opened 2 days ago by rasyosef

APO Trainer in TRL?

1

#2 opened 15 days ago by rasyosef

ChatML template does not work properly

10

#2 opened 25 days ago by WasamiKirua

Mistral-NeMo-Minitron-8B-Chat

3

#5 opened 25 days ago by rasyosef

Collaboration

1

#1 opened 24 days ago by deleted

Error when trying to run

1

#1 opened 24 days ago by ctranslate2-4you

What changed for people using this model in english?

3

#3 opened 29 days ago by migueltalka

Phi 2 Instruct: an instruction following Phi 2 SLM that has undergone SFT and DPO

#132 opened about 1 month ago by rasyosef

What should a finetuned model's license be if the model is MIT but the datasets are Apache 2.0 and cc-by-4.0

5

#866 opened about 2 months ago by rasyosef

Phi 1.5 Instruct: an instruction following Phi 1.5 model that has undergone SFT and DPO

#89 opened about 2 months ago by rasyosef

Update README.md

1

#2 opened 2 months ago by seyyaw

Duplicate?

1

#2 opened 4 months ago by israel

Model card is about Mixtral-8x7B instead of Mixtral-8x22B

1

#3 opened 5 months ago by rasyosef